95% of GenAI pilots fail to reach production. Discover why AI transformation failure is the default outcome in 2026, what Amara’s Law reveals about the hype cycle, and the 5 decisions that separate AI winners from expensive casualties.

Here’s something nobody is saying out loud in your next board meeting: your 47th AI pilot isn’t a sign of progress. It’s a warning.

In 2026, companies have never invested more in AI. Projections put global AI spend at $1.5 trillion. 88% of enterprises say they’re “actively adopting AI.” And yet — according to an MIT NANDA Initiative study — 95% of enterprise generative AI pilots never make it to production. That’s not a rounding error. That’s a structural problem.

The question isn’t whether AI transformation failure is happening. The question is why — and more importantly, what separates the 5% who actually get this right from everyone else.

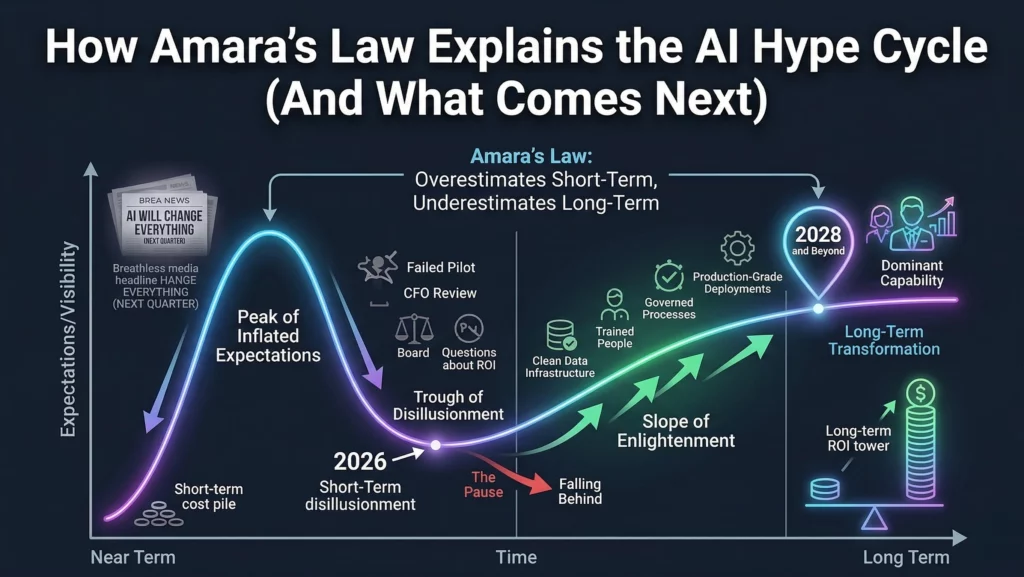

There’s a 50-year-old principle from a futurist named Roy Amara that explains exactly what’s going on. And once you understand it, the chaos of your AI roadmap will suddenly make a lot more sense.

Let’s be honest. When you read “95% of AI pilots fail,” your first instinct is probably to assume your company is the exception. It’s not.

Research from RAND Corporation shows that 80.3% of AI projects across industries fail to deliver measurable business value. A separate analysis found that 73% of companies launched AI initiatives without any clear success metrics defined upfront. You read that right — nearly three-quarters of organisations started building before they knew what winning even looked like.

This isn’t about bad technology. The models work. The vendors are capable. What breaks is everything around the model — strategy, data, people, governance — and most leadership teams never see the collapse coming because they’re measuring the wrong thing: pilot count instead of production value.

There’s a phrase making the rounds in enterprise AI circles right now: pilot purgatory. It describes companies that have launched 30, 50, sometimes 900 AI pilots — and have nothing in production to show for it.

It’s not that the pilots failed dramatically. Most of them looked fine in the demo. They just never shipped. Never scaled. Never created the ROI the board was promised.

The transition from “pilot mania” to portfolio discipline is one of the most critical shifts an enterprise AI leader can make. Without it, you’re essentially paying consultants to run experiments with no path to production.

Roy Amara was a researcher at the Institute for the Future. His observation — now called Amara’s Law — is deceptively simple:

“We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

That’s it. One sentence. And it explains virtually every major technology cycle from the internet boom to the AI hype wave you’re living through right now.

In 2023 and 2024, boards across every industry watched ChatGPT go viral and immediately demanded their organisations “become AI companies.” CTOs were given 90-day mandates. Vendors promised ROI in weeks. Strategy was replaced by speed.

This is AI-induced FOMO — and it’s the most dangerous force in enterprise technology right now. Executives under board pressure are making architecture decisions that should take months in days. They’re buying tools before defining problems. They’re prioritising the announcement over the outcome.

Amara’s Law calls this exactly: we overestimate what AI will do for us in the short term. We expect transformation in a quarter. We get a pilot deck and a vendor invoice.

If you recognise your organisation in this, the FOMO trap is worth understanding in detail — because the antidote isn’t slowing down AI adoption, it’s redirecting it toward your most concrete business problems.

Here’s the other side of Amara’s Law that almost nobody talks about: the underestimation problem.

While companies are busy burning budget on pilots that won’t scale, they’re simultaneously underestimating what AI will actually do to their industry over the next decade. The organisations that treat 2026 as the year to “pause and reassess” will spend 2030 trying to catch up to competitors who used the disillusionment phase to quietly build real capability.

Real impact doesn’t come from the strength of an announcement. It comes from an organisation’s ability to embed technology into its daily operations, structures, and decision-making. That work — the 70% of AI transformation that isn’t about the model at all — takes 18 to 24 months to start producing results and 2 to 4 years for full enterprise transformation.

Most organisations aren’t thinking in those timelines. They’re thinking in sprints.

This is the root cause of most AI transformation failures, and it’s surprisingly common even in technically sophisticated organisations.

When AI sits inside the IT department — with a technology roadmap, technology KPIs, and technology leadership — it gets optimised for the wrong things. Speed of deployment. Number of models trained. API integration counts.

None of those metrics tell you whether your sales team is closing more deals, whether your supply chain is more resilient, or whether your customer service costs have dropped. AI is a business transformation project that uses technology. The moment your team forgets that, you’ve already started losing.

You cannot build reliable AI on unreliable data. This sounds obvious. It apparently isn’t.

According to Gartner, through 2026 organisations will abandon 60% of AI projects that aren’t supported by AI-ready data. 63% of companies don’t have the right data practices in place before they start building. This is what we call the 3-week number change crisis: your AI model gives you an answer today and a different answer next week because the underlying data infrastructure isn’t governed.

You can have the best model in the world. If your data is messy, siloed, or ungoverned, your AI will be too.

Most organisations choose their AI vendor before they’ve defined their AI strategy. They select their model before they’ve mapped their use cases. They build before they’ve asked: what problem are we actually solving, and how will we know when we’ve solved it?

Strategy is the boring part. It doesn’t generate vendor demos or executive LinkedIn posts. But it’s the only thing that ensures your technology investment creates business value instead of interesting experiments.

The real question isn’t “which AI tools should we buy?” It’s “what are the three business outcomes that would move the needle most, and what would it take to achieve them?”

AI transformation requires sustained senior leadership attention. Not a kick-off keynote. Not a quarterly update slide. Sustained, active sponsorship that allocates budget, clears organisational blockers, and ties AI progress to business metrics that executives actually care about.

What typically happens: a CTO or CHRO champions an AI initiative, builds initial momentum, and then gets pulled into operational fires. The AI programme loses its air cover. Middle management optimises for their existing incentives. The pilot sits on the shelf.

Without a named executive owner who is personally accountable for AI ROI — not just AI activity — your programme will stall. Every time.

Here’s the catch: pilots are easy to celebrate. They’re contained, low-risk, and usually involve enthusiastic early adopters who make the demos look great.

Production is hard. It involves legacy systems, resistant end-users, change management, governance, and a long tail of edge cases the pilot never encountered. Most organisations aren’t equipped, or incentivised, to do that hard work.

The result? The pilot dashboard fills up. The production deployment count stays at zero. And leadership keeps approving new pilots because that’s the only visible sign of progress they have.

Stop measuring AI success by the number of pilots. Start measuring it by production deployments, adoption rates, and business value delivered.
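To make that shift concrete, here is a minimal Python sketch of the two numbers that matter more than pilot count: the share of initiatives that reached production, and adoption among the intended users. The `Initiative` fields, the example names, and the figures are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    in_production: bool
    target_users: int  # people the tool is meant for (hypothetical field)
    active_users: int  # people actually using it (hypothetical field)


def production_rate(initiatives: list[Initiative]) -> float:
    """Share of initiatives that reached production (0.0 if there are none)."""
    if not initiatives:
        return 0.0
    shipped = sum(1 for i in initiatives if i.in_production)
    return shipped / len(initiatives)


def adoption_rate(initiative: Initiative) -> float:
    """Active users as a share of intended users (0.0 if no target is set)."""
    if initiative.target_users == 0:
        return 0.0
    return initiative.active_users / initiative.target_users


# Made-up portfolio: four pilots launched, one shipped.
portfolio = [
    Initiative("invoice-triage", True, 200, 150),
    Initiative("sales-email-drafts", False, 80, 0),
    Initiative("support-summaries", False, 120, 0),
    Initiative("contract-search", False, 40, 0),
]

print(f"pilots launched: {len(portfolio)}")
print(f"production rate: {production_rate(portfolio):.0%}")
print(f"adoption (invoice-triage): {adoption_rate(portfolio[0]):.0%}")
```

A dashboard built on pilot count would report four here. The same portfolio measured this way reports one in four shipped, which is the number the board actually needs.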

If you map the current enterprise AI landscape onto the Gartner Hype Cycle, we’re clearly in the Trough of Disillusionment. The breathless “AI will change everything by next quarter” headlines are giving way to CFO reviews, failed pilots, and board-level questions about ROI.

This is exactly what Amara’s Law predicts. The short-term expectations were wildly inflated. Reality has set in. And a significant number of organisations are now considering pulling back from AI investment entirely.

That would be a mistake.

Here’s what Amara’s Law also tells us: the long-term impact of AI is being underestimated right now — especially by the organisations using today’s disillusionment as a reason to pause.

The companies that use 2026 to build real AI capability — clean data infrastructure, trained people, governed processes, production-grade deployments — will be operating at a fundamentally different level of capability by 2028 and beyond. Their competitors who paused will be playing catch-up in a market where the gap compounds.

The first 60 minutes of your AI deployment decisions determine your 10-year ROI more than any other factor. Get the foundation right now, and you’re positioning yourself for the long-term transformation that Amara’s Law says will come.

Every successful AI transformation we’ve seen starts with a simple question: what would have to be true for our business to be meaningfully better in 12 months?

Not “how can we use LLMs?” Not “what can we automate?” Start with the outcome. Work backwards to the capability. Then decide whether AI is the right tool to get there. Sometimes it isn’t — and that’s a useful answer too.

BCG’s research is clear on this: AI success is 10% algorithms, 20% data and technology, and 70% people, process, and culture transformation. Most organisations invest exactly backwards — 70% on the model and 30% on everything else.

Your AI will only be as good as the humans who adopt it, govern it, and continuously improve it. Change management isn’t a nice-to-have. It’s the majority of the work.
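As a quick worked example of the 10/20/70 split, the sketch below divides a budget across the three areas. The split comes from the BCG figures cited above; the budget amount, the function name, and the dictionary keys are illustrative assumptions.

```python
# 10/20/70 split as cited above; keys are illustrative labels.
SPLIT_PERCENT = {
    "algorithms": 10,
    "data_and_technology": 20,
    "people_process_culture": 70,
}


def allocate(budget: int, split_percent: dict[str, int]) -> dict[str, int]:
    """Divide a whole-number budget by percentage shares (rounds each share down)."""
    if sum(split_percent.values()) != 100:
        raise ValueError("percentages must sum to 100")
    return {area: budget * pct // 100 for area, pct in split_percent.items()}


# Hypothetical budget of 1,000,000 in any currency.
allocation = allocate(1_000_000, SPLIT_PERCENT)
for area, amount in allocation.items():
    print(f"{area}: {amount:,}")
```

On a budget of 1,000,000, that is 700,000 for people, process, and culture, and 100,000 for the algorithms themselves.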

Think of AI transformation like building a factory, not running an experiment. A factory has inputs (data), processes (models and pipelines), quality control (governance and monitoring), and outputs (business value).

Building the AI factory means creating the infrastructure for continuous AI delivery — not launching one-off pilots. It means MLOps, data governance, model monitoring, retraining pipelines, and end-user feedback loops. It’s less exciting than a ChatGPT integration. It’s also the only thing that actually scales.

Portfolio discipline means treating AI initiatives like a venture portfolio: a few bets on transformational use cases, a handful on incremental improvements, and clear kill criteria for anything that isn’t moving toward production within a defined timeframe.

It also means saying no. No to the 48th pilot. No to the vendor demo that doesn’t map to a business outcome. No to the impressive-sounding use case that nobody in operations has asked for.

The discipline to stop starting things is just as important as the capability to ship them.
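Kill criteria only work if they are written down before the pilot starts. Here is one way they might look in code, as a rough sketch: the 180-day limit, the `has_business_owner` check, and the pilot names are assumptions for illustration, not thresholds from any study.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Pilot:
    name: str
    started: date
    in_production: bool
    has_business_owner: bool  # hypothetical criterion: someone in operations asked for it


MAX_PILOT_DAYS = 180  # illustrative time limit, not a recommendation


def should_kill(pilot: Pilot, today: date, max_days: int = MAX_PILOT_DAYS) -> bool:
    """Flag a pilot that has not shipped and is either overdue or has no business owner."""
    if pilot.in_production:
        return False
    overdue = (today - pilot.started).days > max_days
    return overdue or not pilot.has_business_owner


today = date(2026, 6, 1)
pilots = [
    Pilot("chatbot-v3", date(2025, 9, 1), False, True),      # running for 273 days
    Pilot("forecasting", date(2026, 3, 1), False, True),     # running for 92 days
    Pilot("doc-tagging", date(2026, 4, 1), False, False),    # nobody asked for it
    Pilot("invoice-triage", date(2025, 6, 1), True, True),   # already shipped
]

to_kill = [p.name for p in pilots if should_kill(p, today)]
print(to_kill)  # ['chatbot-v3', 'doc-tagging']
```

The exact thresholds matter less than the fact that they exist. A pilot that fails the check gets stopped or re-scoped, rather than quietly renewed for another quarter.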

Let’s reframe this. Amara’s Law isn’t a pessimistic view of AI. It’s a realistic one.

The organisations panicking about 95% failure rates and abandoning AI entirely are making the same mistake as the ones launching 900 pilots. They’re optimising for the short term — either by doubling down on hype or retreating from it.

The real opportunity is recognising exactly where we are: in the Trough of Disillusionment, which is precisely where the foundation work that drives long-term transformation gets done.

The AI transformation you build in 2026 — on real data, with real people, solving real business problems — is the transformation that compounds for the next decade.

Stop counting pilots. Start building the capability to ship AI that actually matters.

Ready to move from pilot mania to production value? Ai Ranking helps enterprise leaders design AI transformation strategies built for the long term — not the next board deck. Let’s talk.
