Let’s be honest: you can’t teach an AI agent to do work that nobody can explain clearly. And that’s the exact trap most organizations walk into when deploying AI agents.

The promise sounds incredible: autonomous agents handling customer inquiries, processing approvals, and managing workflows, all while you sleep. But here’s the catch nobody mentions in the sales pitch: AI agents are only as good as the documentation they’re trained on. And in most enterprises, that documentation was written by humans, for humans, years ago, and it hasn’t kept up with how work actually gets done today.

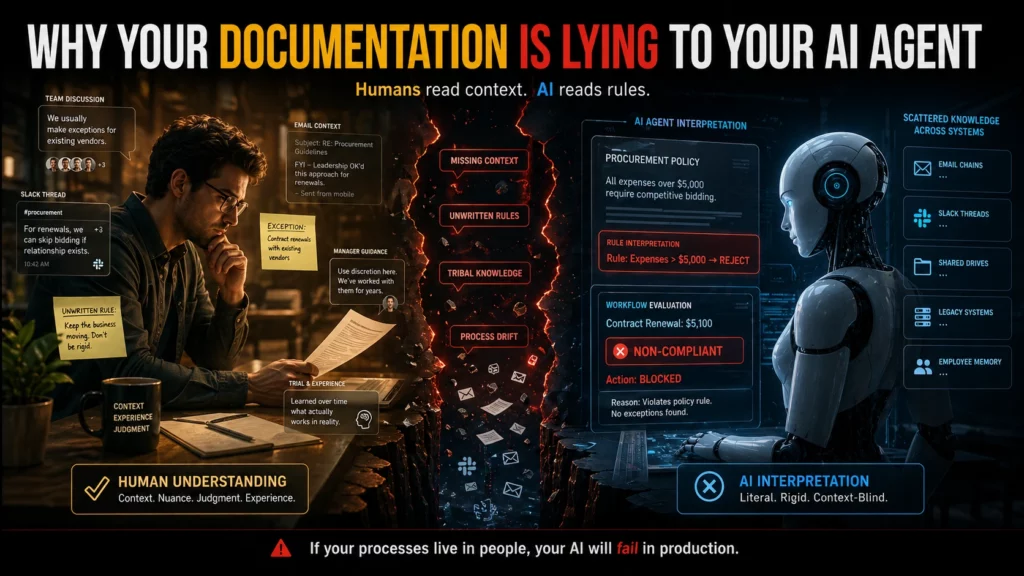

This is the documentation reality gap. Your official process says one thing. Your team does something completely different. And when you hand those outdated documents to an AI agent and tell it to “just follow the process,” you’re not automating efficiency. You’re scaling chaos.

Process documentation in most enterprises is in terrible shape. Not because anyone intended it that way, but because documentation is treated as a compliance checkbox, not a living operational asset.

According to recent research, only 16% of organizations report having extremely well-documented workflows. That means 84% of companies are trying to deploy AI agents on shaky foundations. Even more telling: 49% of organizations admit that undocumented or ad-hoc processes impact their efficiency regularly.

Think about that for a second. Half of all businesses know their processes aren’t properly documented, yet they’re still attempting to hand those same processes to autonomous AI systems and expecting success.

The numbers tell the brutal truth: between 80% and 95% of enterprise AI projects fail to deliver meaningful ROI. And while there are multiple reasons for failure, documentation mismatch sits at the core of most disasters.

Here’s what most people don’t realize: your company’s documentation wasn’t designed to be machine-readable. It was written by someone who understood the context, the history, the unwritten rules, and the exceptions that “everyone just knows.”

An employee reading your procurement policy understands that when it says “expenses over $5,000 require competitive bidding,” there’s an implicit exception for contract renewals with existing vendors. They know this because someone told them during onboarding, or they watched how their manager handled it, or they learned it through trial and error.

An AI agent reading that same policy? It sees an absolute rule. No exceptions. So when a $5,100 contract renewal comes through, the agent flags it as non-compliant — blocking a routine business transaction and creating unnecessary friction.
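The difference between the rule as written and the rule as practiced can be sketched in a few lines of Python. This is only an illustration: the `Purchase` type and its `is_renewal` and `existing_vendor` fields are hypothetical names invented here, and the only values taken from the policy example are the $5,000 threshold and the renewal exception.

```python
from dataclasses import dataclass

BID_THRESHOLD = 5_000  # dollars, from the policy text in the example


@dataclass
class Purchase:
    amount: float
    is_renewal: bool = False        # hypothetical field for illustration
    existing_vendor: bool = False   # hypothetical field for illustration


def requires_bidding_literal(p: Purchase) -> bool:
    """The rule exactly as the written policy states it: no exceptions."""
    return p.amount > BID_THRESHOLD


def requires_bidding_documented(p: Purchase) -> bool:
    """The rule as practiced, with the unwritten exception made explicit."""
    if p.is_renewal and p.existing_vendor:
        return False  # renewals with existing vendors skip competitive bidding
    return p.amount > BID_THRESHOLD


renewal = Purchase(amount=5_100, is_renewal=True, existing_vendor=True)
print(requires_bidding_literal(renewal))     # True: flagged as non-compliant
print(requires_bidding_documented(renewal))  # False: routine renewal passes
```

An agent can only apply the second version if the exception exists somewhere it can read.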

Scattered knowledge across multiple systems makes this problem exponentially worse. When your actual processes live in Slack threads, email chains, and the heads of employees who’ve been there for years, no amount of AI sophistication can bridge that gap.

Even when organizations start with good documentation, there’s another silent killer: configuration drift.

Your systems evolve. Workflows get updated. Teams find workarounds. Exceptions become standard practice. And nobody updates the documentation to reflect reality.

Pavan Madduri, a senior platform engineer at Grainger whose research focuses on governing agentic AI in enterprise IT, points to this as the core flaw in vendor promises that agents can “learn from observing existing workflows.” Observation without context creates incomplete understanding. The agent might replicate the workflow, but it won’t understand why the workflow works that way, or when it should deviate.

ServiceNow and similar platforms tout their ability to learn from years of workflows that have run through their systems. The idea is elegant: no documentation required because the agent learns by watching. But that only works if those workflows were correct in the first place, and if they haven’t drifted over time into something the original architects wouldn’t recognize.

This isn’t a theoretical problem. Organizations are losing real money and credibility because their AI agents are following outdated or incomplete documentation.

New York City’s MyCity chatbot became infamous for giving businesses illegal advice, telling them they could take workers’ tips, refuse tenants with housing vouchers, and ignore cash acceptance requirements. All violations of actual law. The bot confidently dispensed this misinformation for months after the problems were reported, because its documentation didn’t match legal reality.

Air Canada’s chatbot promised customers a discount policy that didn’t exist, and when a customer held the company to it, a Canadian tribunal ruled that Air Canada was liable for what its agent said. The precedent is worth millions, and it’s just the beginning.

In enterprise settings, the damage is often less public but equally expensive. An agent that misinterprets a procurement policy can lock up legitimate transactions. An agent that follows outdated security documentation can create vulnerabilities. An agent that executes based on old workflow diagrams can route approvals to the wrong people, delay critical decisions, or expose sensitive information to unauthorized users.

When your documentation lies about how processes actually work, AI agents don’t just fail — they fail at scale, with speed and consistency that human error could never match.

Most enterprise documentation was written for humans who can:

AI agents can’t do any of that. They need documentation that is:

The gap between these two documentation styles is where most AI agent failures originate. You hand the agent a human-friendly PDF and expect machine-level precision. It doesn’t work.

Here’s another pattern that kills AI implementations: when different teams maintain different versions of the “same” process.

Your HR handbook says remote work is encouraged. Your security policy says VPN access for customer data is restricted. Your IT operations guide has a third set of rules. An employee navigating this knows how to synthesize these documents and make a judgment call. An AI agent sees conflicting instructions and either freezes, picks one arbitrarily, or applies the wrong policy in the wrong context.

Scattered knowledge silently sabotages your AI readiness for a simple reason: when there’s no single source of truth, agents can’t learn what “correct” means. They see multiple versions of reality and have no reliable way to choose.

This creates what researchers call “context blindness”: agent responses don’t match your own documentation because the agent is pulling from outdated, incomplete, or conflicting sources.
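One way to surface this kind of conflict before an agent ever sees it is to collect what each source says about a topic and flag the topics where sources disagree. The sketch below assumes policy statements have already been extracted into `(source, topic, value)` tuples; the source names, topic keys, and values are hypothetical placeholders, not a real schema.

```python
from collections import defaultdict


def find_conflicts(statements):
    """Group statements by topic and return topics whose sources disagree.

    `statements` is an iterable of (source, topic, value) tuples.
    """
    by_topic = defaultdict(dict)
    for source, topic, value in statements:
        by_topic[topic][source] = value
    return {
        topic: sources
        for topic, sources in by_topic.items()
        if len(set(sources.values())) > 1
    }


# Hypothetical extracted statements, loosely modeled on the example above.
statements = [
    ("hr_handbook", "remote_access_to_customer_data", "encouraged"),
    ("security_policy", "remote_access_to_customer_data", "restricted"),
    ("hr_handbook", "expense_reports", "monthly"),
    ("it_ops_guide", "expense_reports", "monthly"),
]

for topic, sources in find_conflicts(statements).items():
    print(topic, sources)
```

Each flagged topic is a place where a human has to decide which source wins, and write that decision down.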

If you’re planning to deploy AI agents, or already struggling with implementations that aren’t working, here’s what needs to happen:

Audit your actual processes, not your documented processes. Shadow employees doing the work. Record what they actually do, not what the handbook says they should do. The delta between those two is your documentation debt, and it needs to be paid before AI can help.
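That delta can be made concrete. As a rough sketch, assuming both the documented process and the observed process have been written down as ordered lists of step names (the steps below are invented for illustration), Python’s standard `difflib` can report which steps exist only on paper and which exist only in practice:

```python
import difflib


def documentation_debt(documented, observed):
    """Return (steps only in the handbook, steps only seen in practice)."""
    matcher = difflib.SequenceMatcher(a=documented, b=observed, autojunk=False)
    only_documented, only_observed = [], []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("delete", "replace"):
            only_documented.extend(documented[i1:i2])
        if tag in ("insert", "replace"):
            only_observed.extend(observed[j1:j2])
    return only_documented, only_observed


# Hypothetical steps for a purchase approval process.
documented = ["submit request", "manager approval", "finance approval", "issue PO"]
observed = ["submit request", "ask in Slack", "manager approval", "issue PO"]

stale, undocumented = documentation_debt(documented, observed)
print("In the handbook but not done:", stale)
print("Done but not in the handbook:", undocumented)
```

Both lists matter: the first shows where the handbook is stale, the second where real work depends on knowledge that was never written down.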

Map where your process documentation lives. Is it in SharePoint? Confluence? Google Docs? Slack channels? Tribal knowledge? If it’s scattered across multiple systems and formats, consolidate it. Agents need a single, authoritative source they can query reliably.

Version control everything. Your documentation should have the same rigor as your code. Track changes. Review updates. Deprecate outdated versions clearly. An agent following last year’s documentation is worse than an agent with no documentation, because it’s confidently wrong.
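A minimal sketch of what “deprecate clearly” could mean for an agent: before it reads a document, it resolves the newest version that is in effect and not marked deprecated, and gets nothing back if no such version exists. The `DocVersion` record and its fields are assumptions made for this example, not the schema of any particular documentation system.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class DocVersion:
    doc_id: str
    version: int
    effective: date           # date this version takes effect
    deprecated: bool = False  # set explicitly when a version is retired


def current_version(
    versions: Iterable[DocVersion], doc_id: str, today: date
) -> Optional[DocVersion]:
    """Return the newest non-deprecated version in effect, or None."""
    candidates = [
        v for v in versions
        if v.doc_id == doc_id and not v.deprecated and v.effective <= today
    ]
    return max(candidates, key=lambda v: v.version, default=None)


# Hypothetical version history for one policy document.
history = [
    DocVersion("procurement-policy", 1, date(2023, 1, 1), deprecated=True),
    DocVersion("procurement-policy", 2, date(2024, 6, 1)),
    DocVersion("procurement-policy", 3, date(2030, 1, 1)),  # not yet in effect
]

print(current_version(history, "procurement-policy", date(2025, 1, 1)))
```

Returning `None` rather than falling back to an old version is a deliberate choice here: having no answer is safer than a confidently wrong one.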

Document exceptions explicitly. That “everyone just knows” exception? Write it down. Define when it applies. Provide examples. AI agents don’t have institutional memory. If it’s not in the documentation, it doesn’t exist.

Test your documentation with someone who’s never done the job. If they can follow your process documentation from start to finish without asking clarifying questions, you’re close to machine-readable. If they get stuck, confused, or need to make judgment calls based on context clues, your documentation isn’t ready for AI.

Implement continuous documentation maintenance. Every time a process changes, the documentation changes. Not “when someone gets around to it.” Immediately. Treat documentation like production code: changes require reviews, approvals, and deployment tracking.

Here’s the question vendors won’t ask you, but you need to ask yourself: can you describe your critical processes completely and accurately, without relying on “that’s just how we’ve always done it”?

If the answer is no, or if there’s significant disagreement among your team about what the “right” process actually is, you’re not ready for AI agents. You don’t have a technology problem. You have an organizational clarity problem.

And that’s actually good news, because organizational clarity problems can be fixed. They just need to be fixed before you hand your processes to an autonomous system and tell it to execute at scale.

The future of enterprise documentation isn’t just writing better documents. It’s designing documentation systems that serve both human and machine readers effectively.

This means:

Some organizations are experimenting with AI agents to help maintain documentation, using agents to identify drift, flag inconsistencies, and suggest updates based on observed workflows. It’s recursive, yes: using AI to fix the documentation that AI needs to function. But it’s also pragmatic.

Eugene Petrenko documented how 16 AI agents helped refactor documentation for other AI agents to use. The key insight? Documentation quality improved dramatically when evaluated by AI readers instead of human assumptions about what AI needs. The metrics were clear: documents that scored 7.0 before refactoring jumped to 9.0 afterward, because the team finally understood what “machine-readable” actually meant.

Organizations rushing to deploy AI agents without fixing their documentation foundations are making an expensive bet. They’re wagering that AI sophistication can overcome organizational chaos. It can’t.

Poor documentation doesn’t become less of a problem when you add AI. It becomes a bigger one. As one practitioner put it: “If you have clean, structured, well-maintained processes, AI makes those faster and easier. If you have chaos, undocumented workarounds, inconsistent data, AI compounds that too. Runs your broken process faster and at higher volume than you ever could manually.”

The agent doesn’t resolve the documentation gap. It scales it.

This is why only 26% of organizations that have implemented AI agents rate them as “completely successful.” The technology works. But the foundations don’t.

Organizations that succeed with AI agents share a common pattern: they invested in documentation excellence before they deployed the first agent.

Snowflake took a data-first approach to AI implementation. Instead of rushing to deploy AI tools across the organization, the company built robust data infrastructure and documentation that AI systems could trust. David Gojo, head of sales data science at Snowflake, emphasizes that successful AI deployments require “accurate, timely information that AI systems can trust.”

The result? AI tools that sales teams actually adopted, because the recommendations were backed by reliable data and clear documentation rather than false confidence built on incomplete information.

If you’re considering AI agents, start with an honest documentation audit. Not the audit where you check whether documentation exists, but the audit where you test whether it reflects reality.

Walk through your critical processes. Compare what’s documented to what actually happens. Identify the gaps. Quantify the drift. And be brutally honest about whether your organization can articulate its processes clearly enough for a machine to follow them.

Because here’s the hard truth: if your documentation doesn’t match reality, your AI agents will fail. Not eventually. Immediately. And the failure will be loud, expensive, and difficult to fix after the fact.

The good news? This is fixable. Documentation debt can be paid down. Processes can be clarified. Knowledge can be consolidated. But it needs to happen before you deploy agents — not after they’ve already scaled your broken processes to catastrophic proportions.

The question isn’t whether your organization will invest in documentation quality. The question is whether you’ll do it before or after your AI agents fail publicly.
