Your company just invested six figures in AI agents. The promise? Automated workflows, instant answers, lightning-fast decisions. The reality? Your agents keep giving wrong answers, missing critical information, and frustrating your team more than helping them.

Here’s the thing most people miss: It’s not the AI that’s failing. It’s your knowledge.

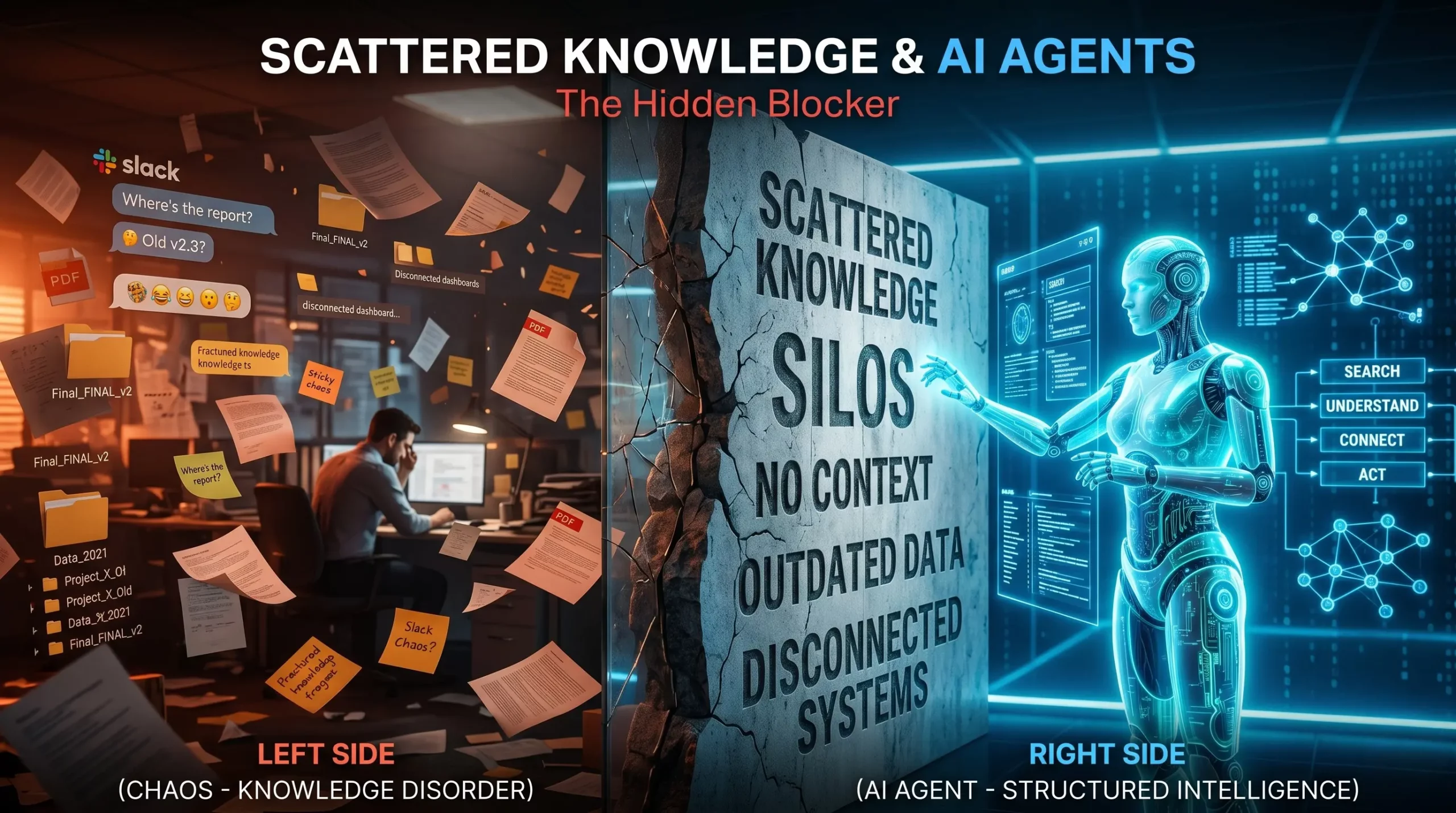

If your information lives across Slack threads, SharePoint sites, Google Docs, email chains, and someone’s desktop folder labeled “Important – Final – FINAL v2,” your AI agents don’t stand a chance. They can’t find what they need because you’ve built a knowledge maze, not a knowledge base.

Let’s be honest about what scattered knowledge really costs you — and more importantly, how to fix it before your AI investment becomes another failed tech initiative.

When information sprawls across multiple tools and teams, it creates what experts call “knowledge silos.” Sounds technical. Feels expensive.

Companies lose between $2.4 million and $240 million a year in productivity to knowledge silos, depending on their size and industry. That's not a rounding error. That's revenue you could be capturing.

But here’s where it gets worse for organizations deploying AI agents. Employees spend roughly 20% of their workweek — one full day — searching for information or asking colleagues for help. Now multiply that frustration by the speed at which AI agents need to operate.

Traditional employees at least know where to look when they hit a dead end. They know Sarah in Sales probably has that updated pricing deck, or that the engineering team keeps their documentation in Confluence (most of the time). AI agents don’t have that institutional memory. When they encounter scattered knowledge, they simply fail.

According to a 2025 McKinsey study, data silos cost businesses approximately $3.1 trillion annually in lost revenue and productivity. The shift to AI doesn’t solve this problem — it amplifies it.

Think about how your team currently finds information. Someone asks a question in Slack. Three people respond with slightly different answers. Someone else jumps in with “I think that process changed last month.” Eventually, someone digs up a document from 2023 that’s “probably still accurate.”

Humans can navigate this chaos. We read between the lines, verify with subject matter experts, and apply context based on what we know about the business. AI agents can’t do any of that.

When an agent gives the wrong answer, the correct information often exists somewhere in your organization — scattered across SharePoint, Confluence, email chains, and tribal knowledge — but your agent simply can’t find it.

Here’s what makes scattered knowledge particularly destructive for AI implementations:

Information lives in isolation. Your customer service knowledge base hasn’t been updated with the product changes engineering shipped last quarter. Your sales playbook doesn’t reflect the pricing structure finance approved two weeks ago. Each team operates with their own version of truth, and your AI agent has to pick which one to believe.

Unstructured knowledge limits accuracy. AI agents need clean, organized, validated information to function properly. When your knowledge exists as casual Slack conversations, outdated PDFs, and half-finished wiki pages, that fragmentation, combined with the limits of manual knowledge capture and organization, often leads to lower productivity and missed opportunities for innovation.

Context gets lost. A document sitting in a folder tells an AI agent nothing about whether it's current, who approved it, or whether it's been superseded by newer information. Structured data is well organized and easily processed by AI tools; unstructured data is sprawling and unverified, which poses tricky problems for agentic tool development.

Every organization says they want a single source of truth. Almost none have one.

What most companies actually have is a "preferred source of truth" (the official wiki that nobody updates) and a "working source of truth" (the Slack channel where real work gets discussed). AI agents need the latter, but they usually only get fed the former.

Shared understanding among AI agents could quickly become shared misconception without ongoing maintenance. If you’re feeding your agents outdated documentation while your team operates based on recent conversations and tribal knowledge, you’re setting them up to confidently deliver wrong answers.

The real question isn’t “Where should we centralize everything?” The real question is “How do we keep knowledge current, connected, and contextual across all the places it naturally lives?”

Companies that successfully deploy AI agents don’t necessarily have less knowledge. They have better-organized knowledge with clear ownership and maintenance processes.

Here’s what separates organizations ready for AI from those still struggling:

Clear ownership of every knowledge asset. Someone owns each piece of information — not just the creation, but the ongoing accuracy. When a product feature changes, there’s a person responsible for updating that knowledge across all relevant systems. No orphaned documents. No “I think someone was supposed to update that.”

Connected information architecture. Your pricing information should automatically flow to sales training materials, customer service scripts, and product documentation. Research shows that sharing knowledge improves productivity by 35%, and connected systems dramatically cut the time employees spend searching for the information their jobs require.
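
One way to make that "automatic flow" concrete is to record which downstream materials reuse which source, so a change to the source produces an explicit list of everything that needs updating. This is a minimal sketch assuming a hand-maintained dependency map; the document names (`pricing`, `sales_training`, and so on) are hypothetical.

```python
from collections import deque

# Hypothetical map: source document -> documents that reuse its content.
DEPENDENTS: dict[str, list[str]] = {
    "pricing": ["sales_training", "support_scripts", "product_docs"],
    "sales_training": ["partner_onboarding"],
    "product_docs": [],
}


def affected_by(changed: str, dependents: dict[str, list[str]]) -> list[str]:
    """Return every document downstream of `changed`, in breadth-first order."""
    seen: set[str] = {changed}
    order: list[str] = []
    queue = deque([changed])
    while queue:
        doc = queue.popleft()
        for child in dependents.get(doc, []):
            if child not in seen:  # guards against cycles and duplicate paths
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


print(affected_by("pricing", DEPENDENTS))
# ['sales_training', 'support_scripts', 'product_docs', 'partner_onboarding']
```

In practice the map would more likely be derived from links or tags than maintained by hand; the point is that a pricing change yields a review list instead of relying on someone remembering who copied the numbers.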

Version control that actually works. One of the more significant challenges is identifying the latest, accurate versions to include in AI models, retrieval-augmented generation systems, and AI agents. If your agent can’t tell which version of a document is current, it will default to whatever it finds first — which is often wrong.
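
A minimal sketch of deciding "which version is current" explicitly instead of by retrieval order, assuming each version carries a status and an effective date. The `DocVersion` fields and the status values are hypothetical, not any particular product's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DocVersion:
    doc_id: str
    version: int
    status: str      # hypothetical values: "draft", "approved", "superseded"
    effective: date  # date this version took effect


def current_version(versions: list[DocVersion], today: date) -> Optional[DocVersion]:
    """Pick the approved version with the latest effective date that is not in the future.

    Returns None when nothing qualifies, so the caller can decline to answer
    rather than fall back to whatever was retrieved first.
    """
    candidates = [v for v in versions if v.status == "approved" and v.effective <= today]
    if not candidates:
        return None
    # Ties on effective date are broken by the higher version number.
    return max(candidates, key=lambda v: (v.effective, v.version))
```

Returning `None` is a deliberate choice: an agent that knows it has no trustworthy version can escalate, which is safer than answering from a draft.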

Metadata that tells the story. Every document should answer: Who created this? When? Who approved it? When was it last verified? What’s the review schedule? Is this still current? External unstructured data requires thoughtful data engineering to extract and maintain structured metadata such as creation dates, categories, severity levels, and service types.
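
Those questions map naturally onto a small record. This is a minimal sketch assuming dates and a review interval expressed in days; the field names are hypothetical and would need to map onto whatever your systems actually store.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class KnowledgeMetadata:
    created_by: str               # who created this?
    created_on: date              # when?
    approved_by: Optional[str]    # who approved it? (None = never approved)
    last_verified: date           # when was it last verified?
    review_every_days: int        # the review schedule, as an interval

    def next_review(self) -> date:
        return self.last_verified + timedelta(days=self.review_every_days)

    def is_current(self, today: date) -> bool:
        """Treat a document as current only if it is approved and not past its review date."""
        return self.approved_by is not None and today <= self.next_review()


meta = KnowledgeMetadata("alice", date(2024, 1, 1), "bob", date(2025, 1, 1), 90)
print(meta.next_review())  # 2025-04-01
```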

Active curation, not passive storage. Knowledge curation transforms scattered information into agent-ready intelligence by systematically selecting, prioritizing, and unifying sources. This isn’t a one-time migration project. It’s an ongoing practice of keeping your knowledge ecosystem healthy.

Even when organizations think they’ve centralized their knowledge, critical gaps remain. These gaps don’t show up in a content audit, but they destroy AI agent performance:

The expertise that lives in people’s heads. Your senior account manager knows that Enterprise clients get special payment terms, but that’s not documented anywhere. Your lead engineer knows that certain API endpoints are unstable under specific conditions, but the official docs don’t mention it. This tribal knowledge is invisible to AI agents until they fail because of it.

Process knowledge versus documented process. Your official onboarding process says new hires complete training in two weeks. The reality? Managers always extend it to three weeks because two isn’t realistic. When documented processes don’t reflect how work actually happens, the gap leads to incorrect decisions. AI agents trained on official documentation will give answers based on the fantasy version of your processes.

The context that makes information actionable. A discount code might be technically active, but customer service shouldn’t offer it because it’s reserved for churn prevention. A feature might be live, but sales shouldn’t mention it because it’s not ready for general availability. The information alone isn’t enough — AI agents need the context around when and how to use it.
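
One way to capture this kind of context is to store the conditions for use alongside the fact itself, so an agent has to check both. A minimal sketch; the `Offer` type, the code `SAVE20`, and the context labels are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Offer:
    code: str
    active: bool
    # Hypothetical labels for the situations in which the offer may be used.
    allowed_contexts: set[str] = field(default_factory=set)


def may_offer(offer: Offer, context: str) -> bool:
    """An offer is usable only if it is active AND the situation is explicitly allowed."""
    return offer.active and context in offer.allowed_contexts


retention = Offer("SAVE20", active=True, allowed_contexts={"churn_prevention"})
print(may_offer(retention, "general_support"))   # False
print(may_offer(retention, "churn_prevention"))  # True
```

The default is deny: an empty `allowed_contexts` means "technically active, but don't offer it," which matches the discount-code example above.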

Cross-functional dependencies nobody documented. Marketing launches a campaign that Sales wasn’t looped into. Engineering deprecates an API that Customer Success was using in their workflows. When Team A needs information from Team B to complete their work, but that knowledge stays locked away, projects stall. AI agents can’t navigate these dependencies if they’re not mapped.

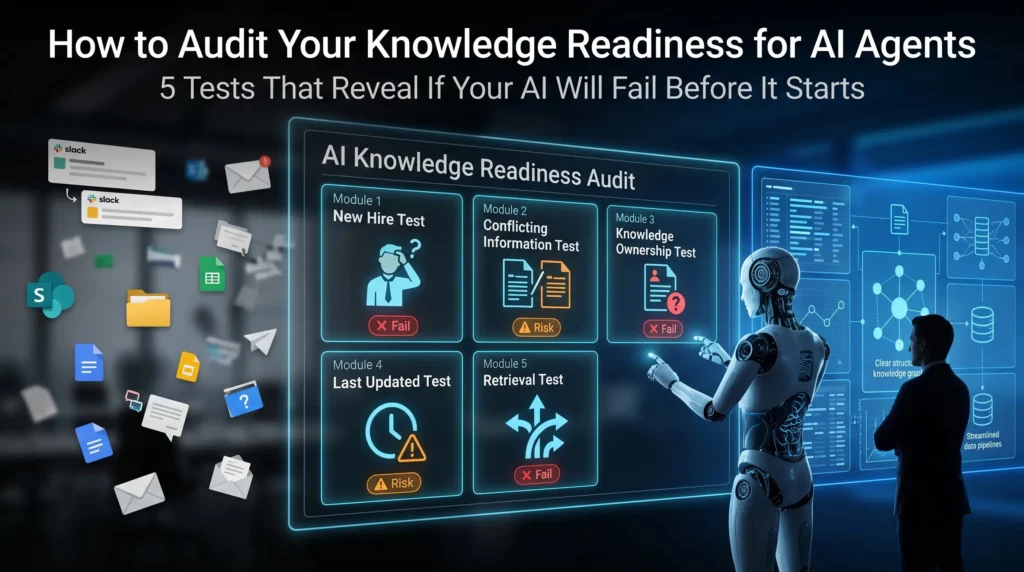

Before you invest another dollar in AI implementation, run this diagnostic. It will tell you whether your knowledge infrastructure can actually support autonomous agents:

The “new hire test.” Could a brand new employee find the answer to a routine customer question using only your documented knowledge base? If they’d need to ask three people and dig through Slack history, your AI agent will fail too.

The "conflicting information test." Search for your return policy across all your systems. How many different versions do you find? If the answer is more than one, your knowledge is fragmented. When different files, tools, and teams hold conflicting data and there's no single reliable source, agents struggle.
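
Once copies of the policy have been gathered from each system, the comparison can be partly automated. This sketch only catches textual differences after simple normalization (case and whitespace); it will not recognize two differently worded texts that say the same thing. The source names and policy text are made up for illustration.

```python
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't count."""
    return re.sub(r"\s+", " ", text).strip().lower()


def distinct_versions(copies: dict[str, str]) -> dict[str, list[str]]:
    """Group sources by the normalized text they contain.

    `copies` maps a source name to the policy text found there. More than
    one key in the result means the systems disagree.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for source, text in copies.items():
        groups[normalize(text)].append(source)
    return dict(groups)


found = {
    "wiki": "Returns accepted within 30 days.",
    "helpdesk": "Returns  accepted within 30 days.",
    "sales_deck": "Returns accepted within 14 days.",
}
versions = distinct_versions(found)
print(len(versions))  # 2
```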

The “knowledge owner test.” Pick ten critical documents. Can you identify who owns each one? Who updates them when things change? If the answer is “whoever created it three years ago but they left the company,” you have an ownership problem.
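
If ownership is recorded anywhere, this test reduces to a comparison against the current staff list. A minimal sketch, assuming a document-to-owner mapping and a set of active employees; all names are hypothetical.

```python
from typing import Optional


def ownership_gaps(owners: dict[str, Optional[str]], active_staff: set[str]) -> list[str]:
    """Return documents with no owner, or whose owner is no longer on staff."""
    return sorted(
        doc for doc, owner in owners.items()
        if owner is None or owner not in active_staff
    )


owners = {
    "pricing_sheet": "dana",
    "refund_policy": None,
    "api_guide": "sam",  # in this example, sam has left the company
}
print(ownership_gaps(owners, active_staff={"dana", "lee"}))
# ['api_guide', 'refund_policy']
```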

The “last updated test.” Look at your top 20 most-accessed knowledge articles. When were they last reviewed? Anyone who has stumbled across an old SharePoint site or outdated shared folder knows how quickly documentation can fall out of date and become inaccurate. Humans can spot these red flags. AI agents can’t.
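
The test can be run as a simple report over view counts and review dates. A minimal sketch; the `Article` fields are hypothetical, and the 180-day threshold is an assumption you would set to match your own review cycle.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Article:
    title: str
    views: int
    last_reviewed: date


def stale_top_articles(articles: list[Article], today: date,
                       top_n: int = 20, max_age_days: int = 180) -> list[str]:
    """Among the `top_n` most-viewed articles, return those not reviewed within `max_age_days`."""
    top = sorted(articles, key=lambda a: a.views, reverse=True)[:top_n]
    return [a.title for a in top if (today - a.last_reviewed).days > max_age_days]


articles = [
    Article("Returns", 900, date(2023, 1, 10)),
    Article("Pricing", 800, date(2025, 5, 1)),
    Article("Old FAQ", 5, date(2020, 1, 1)),
]
print(stale_top_articles(articles, date(2025, 6, 1), top_n=2))  # ['Returns']
```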

The “retrieval test.” Ask five people across different departments to find the same piece of information. How many different places do they look? How long does it take? If everyone has a different search strategy, your knowledge isn’t as organized as you think.

Here’s what most consultants won’t tell you: You don’t need to fix everything before deploying AI agents. You need to fix the right things in the right order.

Start with your highest-impact knowledge domains. Where do wrong answers cost you the most? Customer service? Sales enablement? Technical support? Start there. Apply impact filters prioritizing sources that drive revenue, reduce risk, or unblock high-volume tasks. A pricing database enabling deal closure ranks higher than archived meeting notes.
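
The impact filter can be made explicit as a scoring rule. A minimal sketch: the three criteria come from the paragraph above, but the weights, the `weekly_lookups` volume proxy, and the source names are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    drives_revenue: bool
    reduces_risk: bool
    weekly_lookups: int  # hypothetical proxy for "unblocks high-volume tasks"


def impact_score(s: Source) -> float:
    # Hypothetical weights; tune them to what a wrong answer costs you in each area.
    return 3.0 * s.drives_revenue + 2.0 * s.reduces_risk + s.weekly_lookups / 100


sources = [
    Source("archived_meeting_notes", False, False, 2),
    Source("pricing_database", True, False, 400),
    Source("security_policy", False, True, 50),
]
ranked = sorted(sources, key=impact_score, reverse=True)
print([s.name for s in ranked])
# ['pricing_database', 'security_policy', 'archived_meeting_notes']
```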

Create a knowledge governance model. Assign clear owners. Establish review cycles. Build update workflows. Unlike traditional knowledge management systems, context-aware AI considers the user role, workflow stage, and policy requirements. Your governance model should support this by ensuring the right information gets to the right agents at the right time.

Connect your knowledge sources, don’t consolidate them. You don’t need to move everything into one system. You need systems that talk to each other. The real value comes from converting fragmented information into contextual, workflow-ready intelligence — not just faster retrieval.

Implement structured metadata. Add consistent tags, categories, and attributes to your knowledge assets. This metadata helps AI agents understand not just what information says, but when it’s relevant, who should use it, and how current it is.
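
Metadata only helps if retrieval actually uses it. A minimal sketch of a filter applied before documents reach the agent, assuming each document carries an audience, a status, and a verify-by date; the field names and status values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    text: str
    audience: set[str]  # roles meant to use this document, e.g. {"support"}
    status: str         # hypothetical values: "current" or "superseded"
    verify_by: date     # date by which the content must be re-verified


def usable_for(docs: list[Doc], role: str, today: date) -> list[Doc]:
    """Keep only documents meant for `role`, marked current, and not past verification."""
    return [
        d for d in docs
        if role in d.audience and d.status == "current" and today <= d.verify_by
    ]
```

In a retrieval-augmented setup, a filter like this would typically run before ranking, so stale or off-audience content never competes with the right answer.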

Build feedback loops. Discovery tools should profile content and enable training on your historical data. When your AI agent gives a wrong answer, that should trigger a knowledge review. Wrong answers are symptoms of knowledge gaps — treat them as diagnostic tools.
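
A minimal sketch of that trigger, assuming each wrong-answer report records which document the agent relied on. The report format, the threshold of two reports, and the owner mapping are hypothetical; a threshold of 1 would queue a review on every single report.

```python
from collections import Counter


def review_queue(wrong_answers: list[tuple[str, str]],
                 owners: dict[str, str], threshold: int = 2) -> dict[str, list[str]]:
    """Turn wrong-answer reports into review tasks grouped by knowledge owner.

    `wrong_answers` holds (question, source_doc) pairs. Documents cited in at
    least `threshold` reports are queued for their owner; documents without
    an owner go to "unassigned".
    """
    counts = Counter(doc for _, doc in wrong_answers)
    queue: dict[str, list[str]] = {}
    for doc, n in counts.most_common():  # most-reported documents first
        if n >= threshold:
            queue.setdefault(owners.get(doc, "unassigned"), []).append(doc)
    return queue


reports = [
    ("refund window?", "refund_policy"),
    ("refund for sale items?", "refund_policy"),
    ("enterprise terms?", "payment_terms"),
    ("enterprise discount?", "payment_terms"),
    ("api limits?", "api_guide"),
]
print(review_queue(reports, owners={"refund_policy": "dana"}))
```

Routing unowned documents to an explicit "unassigned" bucket surfaces the ownership gaps described earlier instead of letting those reports disappear.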

Invest in knowledge curation, not just content creation. Most organizations have enough knowledge. They don’t have enough organized, validated, accessible knowledge. The key discovery question cuts through organizational assumptions: “When an agent gives the wrong answer, where would a human expert double-check?” This reveals gaps between official documentation and working knowledge.

If you’re a CEO, CTO, or business leader evaluating AI agent readiness, stop asking “What’s the best AI platform?” Start asking these questions instead:

The answers to these questions determine whether your AI investment delivers value or becomes another expensive failed experiment.

Organizations that nail knowledge management for AI agents don’t have perfect documentation. They have living, maintained, connected knowledge ecosystems.

AI agents are helping organizations rethink how they capture, organize, and tap into their collective knowledge — acting more like intelligent coworkers able to understand, reason, and take action.

But this only works when the knowledge foundation is solid. When information flows freely across systems. When ownership is clear. When freshness is tracked. When context is preserved.

The companies seeing real ROI from AI agents didn’t start with the sexiest AI models. They started by fixing their knowledge infrastructure. They recognized that organizations need trusted, company-specific data for agentic AI to truly create value — the unstructured data inside emails, documents, presentations, and videos.

Your AI agents are only as good as the knowledge they can access. Scattered, siloed, outdated information doesn’t become magically useful just because you’ve deployed advanced AI models.

The gap between AI hype and AI reality isn’t about the technology. It’s about the foundation. Companies rushing to implement AI agents without fixing their knowledge infrastructure are building on quicksand.

The good news? Knowledge management is solvable. It’s not a sexy transformation project, but it’s the difference between AI agents that actually work and ones that just frustrate your team.

The question isn’t whether you should fix your scattered knowledge problem. The question is whether you’ll fix it before or after your AI initiative fails.
