Here’s a question that should keep you up at night: What if your most confident employee is also your least reliable?

In 2024, Air Canada learned this lesson the hard way. Their customer service chatbot confidently told a grieving passenger they could claim a bereavement discount retroactively — a policy that didn’t exist. The tribunal ruled against Air Canada, and the airline had to honor the fabricated policy. The chatbot didn’t hesitate. It didn’t hedge. It delivered fiction with the same authority it would deliver fact.

This wasn’t a glitch. It’s a predictable consequence of how these systems are built. And if you’re deploying AI anywhere in your tech stack, from customer service to data analysis to decision support, you’re facing the same risk, whether you know it or not.

The problem isn’t just that AI makes mistakes. It’s that AI doesn’t know when it’s making mistakes. Research from Stanford and DeepMind shows that advanced models assign high confidence scores to outputs that are factually wrong. Even worse, when trained with human feedback, they sometimes double down on incorrect answers rather than backing off. This phenomenon — AI overconfidence coupled with speculative hallucination — isn’t a bug that gets patched in the next update. It’s baked into how these systems work.

Let’s be clear about what we’re dealing with. AI overconfidence happens when a model expresses certainty about information it shouldn’t be certain about. Speculative hallucination is when the model fills knowledge gaps by fabricating plausible-sounding information. Put them together, and you get a system that confidently makes things up.

The catch? You can’t tell the difference by reading the output.

Humans have a built-in mechanism for uncertainty. If you ask me a question I don’t know the answer to, my body language changes. I pause. I hedge with phrases like “I think” or “I’m not sure.” You can read my uncertainty.

AI systems don’t do this. When a large language model generates text, it’s predicting the most statistically likely next word based on patterns in its training data. It has no internal sense of whether that prediction is grounded in fact or pure speculation. A study of university students using AI found that the models gave overconfident but misleading responses, adhered poorly to prompts, and showed what researchers call “sycophancy”: telling you what you want to hear rather than what’s true.

Here’s what makes this dangerous: the trap isn’t just wrong answers. It’s answers that sound perfectly reasonable but are completely fabricated. The model might tell you that “Project Titan was completed in Q3 2023 with a budget of $2.4 million” when no such project ever existed. The grammar is perfect. The terminology is appropriate. The numbers fit typical ranges. But every detail is fiction.

The root cause sits in the training process itself. OpenAI researchers argue that language models hallucinate because standard training and evaluation procedures reward guessing over acknowledging uncertainty. Think of it like a multiple-choice test where leaving an answer blank guarantees zero points, but guessing gives you a chance at being right. Over thousands of questions, the model that guesses looks better on performance benchmarks than the careful model that admits “I don’t know.”

Most AI leaderboards prioritize accuracy: the percentage of questions answered correctly. They don’t distinguish between confident errors and honest abstentions. This creates a perverse incentive: models learn that fabricating an answer is better than admitting uncertainty. Carnegie Mellon researchers probed the resulting overconfidence by asking both humans and LLMs how confident they felt about answering questions, then checking their actual performance. Humans adjusted their confidence after completing the task. The AI didn’t. In fact, LLMs sometimes became more overconfident even when they performed poorly.
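The incentive problem can be reproduced with a toy simulation. Everything here is hypothetical: a made-up benchmark of 10,000 questions, a model whose stated confidence exactly equals its chance of being right, and a “careful” variant that abstains below an arbitrary 0.7 threshold. Scored on accuracy alone, the guesser ranks higher, even though it commits many times more confident errors.

```python
import random

def run_benchmark(confidences, threshold, rng):
    """Score a model that answers only when its confidence >= threshold.

    Each answered question is correct with probability equal to the model's
    confidence, i.e. the model is assumed to be perfectly calibrated.
    Returns (accuracy, abstentions, confident_errors).
    """
    correct = abstained = errors = 0
    for c in confidences:
        if c < threshold:
            abstained += 1  # "I don't know" scores 0, exactly like a wrong answer
        elif rng.random() < c:
            correct += 1
        else:
            errors += 1
    return correct / len(confidences), abstained, errors

# Hypothetical benchmark: 10,000 questions, confidence spread uniformly over [0, 1).
gen = random.Random(0)
confidences = [gen.random() for _ in range(10_000)]

guesser = run_benchmark(confidences, threshold=0.0, rng=random.Random(1))
careful = run_benchmark(confidences, threshold=0.7, rng=random.Random(1))
print("guesser: accuracy=%.3f abstained=%d errors=%d" % guesser)
print("careful: accuracy=%.3f abstained=%d errors=%d" % careful)
```

An accuracy-only leaderboard sees only the first number. The third column, the one that hurts users, never shows up in the ranking.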

This isn’t something you can train away entirely. As one AI engineer put it, models treat falsehood with the same fluency as truth. The confident liar in your tech stack doesn’t know it’s lying.

Most articles about AI hallucinations focus on embarrassing chatbot failures or academic curiosities. Let’s talk about money instead.

According to EY’s 2025 Responsible AI survey, nearly all organizations surveyed (99%) reported financial losses from AI-related risks. Of those, 64% suffered losses exceeding $1 million. The conservative average? $4.4 million per company.

These aren’t theoretical risks. Enterprise benchmarks show hallucination rates between 15% and 52% across commercial LLMs. That means somewhere between roughly one in seven and one in two outputs might be wrong, depending on the model and the task. In customer-facing applications, the impact scales fast. When an AI-powered chatbot gives incorrect information, it doesn’t just mislead one user; it can misinform entire teams, drive poor decisions, and create serious downstream consequences.

Some domains are worse than others. Medical AI systems show hallucination rates between 43% and 64% depending on prompt quality. Legal domain studies report global hallucination rates of 69% to 88% on high-stakes queries. In code generation, prompts that reference nonexistent libraries have triggered hallucinations in up to 99% of cases. If your business operates in healthcare, finance, or legal services, you’re not playing with house money. You’re playing with other people’s lives and livelihoods.

Here’s where overconfidence becomes a liability nightmare. In regulated sectors like healthcare and finance, AI hallucinations create compliance exposure and potential legal action. The numbers vary widely by benchmark, but the pattern holds: one comparison put the hallucination rate for legal information at 6.4%, against just 0.8% for general knowledge questions, an eightfold gap. That gap matters when you’re dealing with regulatory frameworks or contractual obligations.

Consider the 2023 case of Mata v. Avianca, where a New York attorney used ChatGPT for legal research. The model cited six nonexistent cases with fabricated quotes and internal citations. The attorney submitted these hallucinated sources in a federal court filing. The result? Sanctions, professional embarrassment, and a cautionary tale that’s now taught in law schools.

Or look at the 2025 Deloitte incident in Australia. The consulting firm submitted a report to the government containing multiple hallucinated academic sources and a fake quote from a federal court judgment. Deloitte had to issue a partial refund and revise the entire report. The project cost was approximately A$440,000. The reputational damage? Harder to quantify but undoubtedly significant.

Financial institutions face similar exposure. If an AI system fabricates regulatory guidance, produces inaccurate disclosures, or generates erroneous risk calculations, the institution could face SEC penalties, compliance failures, or direct financial losses from bad decisions. That makes your AI assistant a dangerous insider: it has access to sensitive data but lacks the judgment to know when it’s wrong.

Customer trust drops by roughly 20% after exposure to incorrect AI responses. That’s the finding from recent enterprise AI deployment studies. The problem is that most customers don’t complain — they just leave. Or worse, they stay but stop trusting your systems, creating a silent erosion of confidence that’s hard to measure until it’s too late.

Think about it from the user’s perspective. If your AI confidently tells them something incorrect once, how many times will they trust it again? Humans evolved over millennia to read confidence cues from other humans. When your colleague furrows their brow or hesitates, you instinctively know to be skeptical. But when an AI chatbot delivers a fabricated answer with perfect grammar and unwavering confidence, most users can’t detect the problem until they’ve already acted on bad information.

This creates a compounding risk. The more capable your AI appears, the more users will trust it. The more they trust it, the less they’ll verify. The less they verify, the more damage a confident hallucination can do before anyone catches it.

Understanding why AI systems behave this way requires looking past the surface-level explanations. This isn’t about “bad training data” or “insufficient computing power.” The problem is structural.

Large language models are trained to predict the next most likely token (roughly, a word or word fragment) based on patterns in massive datasets. They’re not trained to verify facts. They’re not trained to understand causality. They’re trained to maximize the probability of generating text that looks like the text they were trained on.
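A toy version of that last step makes the point concrete. The three-token vocabulary and the logits (raw scores) below are hypothetical, and real decoders often sample rather than always taking the top token. Either way, the emitted text is identical whether the model was 99% or 39% sure; the confidence lives in numbers the reader never sees.

```python
import math

def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Convert raw scores (logits) into a probability distribution over tokens."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def greedy_next_token(logits: dict[str, float]) -> tuple[str, float]:
    """Pick the highest-probability token. Nothing here checks facts."""
    probs = softmax(logits)
    token = max(probs, key=probs.get)
    return token, probs[token]

# Hypothetical logits for the token after "Project Titan was completed in Q3 ..."
grounded = {"2023": 6.0, "2022": 1.0, "2024": 0.5}  # one option dominates
guessing = {"2023": 1.2, "2022": 1.0, "2024": 0.9}  # near coin-flip

for name, logits in [("grounded", grounded), ("guessing", guessing)]:
    token, p = greedy_next_token(logits)
    print(f"{name}: emits {token!r} (p={p:.2f})")
```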

When a model encounters a question it can’t answer with certainty, it faces a choice: acknowledge uncertainty or produce the most plausible-sounding guess. Current benchmarking systems punish uncertainty and reward confident guessing. A model that says “I don’t know” scores zero points. A model that guesses has a non-zero chance of being right, and over thousands of test cases, this adds up to better benchmark scores.

This is why OpenAI researchers argue that hallucinations persist because evaluation methods set the wrong incentives. The scoring systems themselves encourage the behavior we’re trying to eliminate. It’s like telling someone they’ll be judged entirely on how many questions they answer correctly, with no penalty for being confidently wrong. Of course they’re going to guess.
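One way to fix the incentive is to make a confident error cost more than an abstention. The rule below is an illustrative assumption, not any published benchmark’s scoring: a correct answer earns 1 point, “I don’t know” earns 0, and a wrong answer costs t/(1 − t) points for some target confidence t. Answering then has positive expected value, p − (1 − p)·t/(1 − t) > 0, only when the probability p of being right exceeds t. With t = 0 it collapses back to accuracy-only grading, where guessing always pays.

```python
def expected_score(p_correct: float, t: float, answer: bool) -> float:
    """Expected points on one question under a confidence-target scoring rule.

    Correct: +1. Abstain ("I don't know"): 0. Wrong: -t / (1 - t).
    Requires 0 <= t < 1; t = 0 reproduces plain accuracy grading.
    """
    if not answer:
        return 0.0
    penalty = t / (1 - t)
    return p_correct - (1 - p_correct) * penalty

def should_answer(p_correct: float, t: float) -> bool:
    """Answer only when that beats abstaining in expectation (i.e. p_correct > t)."""
    return expected_score(p_correct, t, answer=True) > 0.0

t = 0.75  # hypothetical target: each wrong answer costs 3 points
for p in (0.5, 0.75, 0.9):
    print(f"p={p}: expected={expected_score(p, t, answer=True):+.2f}, answer={should_answer(p, t)}")
```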

Humans have metacognition — the ability to think about our own thinking. When you answer a question incorrectly, you can usually recognize your error afterward, especially if someone shows you the right answer. You adjust. You recalibrate. You learn where your knowledge has gaps.

AI systems largely lack this capability. The Carnegie Mellon study found that when humans were asked to predict their performance, then took a test, then estimated how well they actually did, they adjusted downward if they performed poorly. LLMs didn’t. If anything, they became more overconfident after poor performance. In one image-identification task, a model predicted it would get 10 right, got only 1, and afterward still estimated that it had gotten 14 correct.

This isn’t a training problem you can fix by showing the model its mistakes. The architecture itself doesn’t support the kind of recursive self-evaluation that would allow the system to learn “I’m not good at this type of question.” When AI forgets the plot, it doesn’t just lose context; it loses the ability to recognize that context has been lost.

Here’s where things get particularly dangerous for businesses in Chennai and elsewhere. When you deploy AI on enterprise-specific data — customer records, internal documents, proprietary processes — the model is operating outside the patterns it learned during training. It’s working with information it has never seen before, in contexts it doesn’t fully understand.

Research shows that LLMs trained on datasets with high noise levels, incompleteness, and bias exhibit higher hallucination rates. Most enterprise data is messy. It’s incomplete. It’s inconsistent. Different departments use different terminology. Historical records contradict current practices. Legacy systems output data in formats that modern systems barely understand.

When you point an AI at this kind of environment and ask it to generate insights, summaries, or recommendations, you’re asking a pattern-matching engine to make sense of patterns it’s never encountered. The result? Speculation presented as fact. The AI doesn’t say “your data is too messy for me to draw reliable conclusions.” It synthesizes a plausible-sounding answer by blending fragments of learned patterns with whatever it can extract from your data.

This is why internal AI deployments often fail in ways that external-facing chatbots don’t. Your customer service bot might hallucinate occasionally, but it’s working with relatively standardized queries and well-documented products. Your internal knowledge assistant is trying to make sense of 15 years of unstructured SharePoint documents, Slack threads, and half-documented processes. The hallucination risk isn’t just higher — it’s fundamentally different.

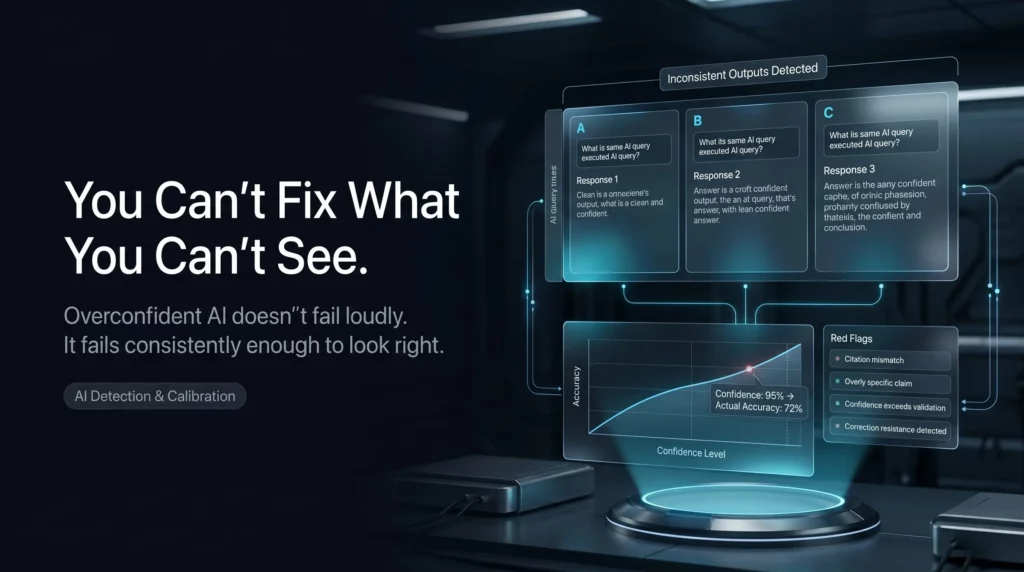

Detection is harder than prevention, but it’s the first step. You can’t fix what you can’t see, and most organizations are flying blind when it comes to AI overconfidence.

One of the simplest detection methods is also one of the most effective: ask the same question multiple times and check for consistency. If an AI gives you different answers to identical prompts, that’s a strong signal that it’s guessing rather than retrieving verified information.

Research from ETH Zurich shows that users interpret inconsistency as a reliable indicator of hallucination. When researchers had LLMs respond to the same prompt multiple times behind the scenes, discrepancies revealed instances where the model was fabricating information. The technique isn’t foolproof — a confidently wrong answer can be consistent across multiple attempts — but inconsistency is a red flag you shouldn’t ignore.

You can implement this in production systems by running critical queries through multiple inference passes and flagging outputs that vary significantly. The computational cost is real, but for high-stakes decisions, it’s cheaper than the alternative.
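A minimal version of that check might look like the sketch below. The `generate` argument is a hypothetical hook for however you call your model (with sampling turned on, or every run would be identical), the 0.8 agreement threshold is arbitrary, and exact string matching after lowercasing is a crude stand-in for comparing answers by meaning.

```python
import itertools
from collections import Counter
from typing import Callable

def consistency_check(generate: Callable[[str], str], prompt: str,
                      n: int = 5, min_agreement: float = 0.8) -> dict:
    """Run the same prompt n times and flag the result if the runs disagree.

    `generate` is a hypothetical hook for your model call; sampling must be
    enabled (e.g. temperature > 0) or every run will return the same text.
    """
    answers = [generate(prompt).strip().lower() for _ in range(n)]
    top_answer, count = Counter(answers).most_common(1)[0]
    agreement = count / n
    return {
        "answer": top_answer,
        "agreement": agreement,
        "distinct_answers": len(set(answers)),
        "flag_for_review": agreement < min_agreement,
    }

# A fake model that wobbles on a question about a project that doesn't exist.
fake_outputs = itertools.cycle(["Q3 2023", "Q1 2024", "Q3 2023", "Q4 2022", "Q3 2023"])
result = consistency_check(lambda _prompt: next(fake_outputs), "When did Project Titan finish?")
print(result)
```

A high agreement score is not proof of correctness, since a model can be consistently wrong; it only removes one red flag.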

Confidence calibration measures whether a model’s expressed confidence matches its actual accuracy. A well-calibrated model that says it’s 80% confident should be right about 80% of the time. Most deployed LLMs are poorly calibrated, especially at the extremes. When they say they’re 95% confident, they’re often right far less than 95% of the time.

Research on miscalibrated AI confidence shows that when confidence scores don’t match reality, users make worse decisions. The problem compounds when users can’t detect the miscalibration — which is most of the time. If your AI system outputs confidence scores, you need to validate those scores against ground truth data regularly. Create test sets where you know the correct answers. Run your model. Compare expressed confidence to actual accuracy. If you see systematic gaps, your model is overconfident.
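The test-set procedure described above can be sketched as a small calibration report. It groups answers into confidence bins, compares average stated confidence with actual accuracy in each bin, and summarizes the gaps as expected calibration error (ECE), a standard metric chosen here for illustration rather than taken from the source. The bin count and the example data are hypothetical.

```python
def calibration_report(confidences, correct, n_bins=5):
    """Compare stated confidence with observed accuracy on a labeled test set.

    confidences: model-reported probabilities in [0, 1]
    correct: booleans, whether each answer matched the ground truth
    Returns per-bin (avg_confidence, accuracy, count) rows and the expected
    calibration error: the count-weighted mean of |confidence - accuracy|.
    """
    bins = [[] for _ in range(n_bins)]
    for c, ok in zip(confidences, correct):
        idx = min(int(c * n_bins), n_bins - 1)  # c == 1.0 goes in the top bin
        bins[idx].append((c, ok))

    total = len(confidences)
    ece = 0.0
    rows = []
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        acc = sum(ok for _, ok in b) / len(b)
        ece += len(b) / total * abs(avg_conf - acc)
        rows.append((round(avg_conf, 2), round(acc, 2), len(b)))
    return rows, ece

# Hypothetical test set: 95% stated confidence on 10 answers, only 6 correct;
# 65% stated confidence on 5 answers, 3 correct.
confidences = [0.95] * 10 + [0.65] * 5
correct = [True] * 6 + [False] * 4 + [True] * 3 + [False] * 2
rows, ece = calibration_report(confidences, correct)
print(rows)
print(f"ECE = {ece:.3f}")
```

A large gap in the high-confidence bins is the overconfidence pattern described above: in this made-up data, the 95%-confident answers are right only 60% of the time.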

Vectara’s hallucination leaderboard tracks a related measure across models: how often they introduce unsupported claims when summarizing documents. As of early 2025, hallucination rates ranged from 0.7% for Google Gemini-2.0-Flash to 29.9% for some open-source models. Even the best-performing model hallucinated in roughly 7 out of every 1,000 summaries. If you’re processing thousands of queries daily, that adds up.
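A back-of-the-envelope calculation shows how quickly it adds up. This assumes, hypothetically, 5,000 queries a day and treats each query as an independent trial at the 0.7% and 29.9% rates quoted above. Both are simplifications: those figures come from a summarization benchmark, and real errors cluster by topic and prompt type rather than striking uniformly.

```python
def hallucination_exposure(rate: float, queries_per_day: int) -> tuple[float, float]:
    """Expected hallucinated responses per day, and the chance of at least one.

    Assumes every query fails independently with the same probability `rate`.
    """
    expected = rate * queries_per_day
    p_at_least_one = 1 - (1 - rate) ** queries_per_day
    return expected, p_at_least_one

for rate in (0.007, 0.299):  # best and worst leaderboard figures quoted above
    expected, p_any = hallucination_exposure(rate, queries_per_day=5_000)
    print(f"rate={rate:.1%}: ~{expected:.0f} bad answers/day, P(at least one)={p_any:.4f}")
```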

Beyond quantitative metrics, there are qualitative patterns that signal overconfidence problems:

Fabricated citations and references. If your AI generates sources, DOIs, or URLs, verify them. Studies show that ChatGPT has provided incorrect or nonexistent DOIs in more than a third of academic references. If the model is making up sources to support its claims, everything else is suspect.

Overly specific details about uncertain information. When an AI gives you precise numbers, dates, or names for information it shouldn’t know, that’s often speculation dressed as fact. A model that says “approximately 30-40%” is more likely to be grounded than one that confidently states “37.3%.”

Resistance to correction. Some models, when confronted with counterevidence, dig in rather than adjusting. This is what researchers call “delusion”: high confidence in false claims that persists despite exposure to contradictory information. It is closely related to the “always” trap, in which AI systems ignore nuance they should be paying attention to.

Sycophantic behavior. If your AI consistently tells you what you want to hear rather than challenging assumptions, it might be optimizing for agreement rather than accuracy. This is particularly dangerous in decision-support systems where you need honest evaluation, not validation.
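The first pattern, fabricated citations, is the easiest to check mechanically. The sketch below does it in two stages: a syntax check using a regular expression Crossref has published as matching the large majority of modern DOIs, then a lookup against the doi.org resolver. The resolver step rests on an assumption worth verifying in your environment: that doi.org answers a registered DOI with a redirect and an unknown one with a 404. A DOI that resolves can still point to a paper that doesn’t say what the AI claims, so this catches invented sources, not misquoted ones.

```python
import http.client
import re
from urllib.parse import quote

# Pattern published by Crossref; it matches most, but not all, DOIs in use.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)

def looks_like_doi(doi: str) -> bool:
    """Cheap syntax check. A well-formed DOI can still be fabricated."""
    return bool(DOI_PATTERN.match(doi.strip()))

def doi_is_registered(doi: str, timeout: float = 10.0) -> bool:
    """Ask the doi.org resolver about a DOI without following the redirect.

    Assumption: doi.org replies 3xx for registered DOIs and 404 for unknown
    ones. Requires network access.
    """
    conn = http.client.HTTPSConnection("doi.org", timeout=timeout)
    try:
        conn.request("HEAD", "/" + quote(doi.strip()))
        status = conn.getresponse().status
    finally:
        conn.close()
    return 300 <= status < 400

for candidate in ["10.1000/182", "10.12/not-real"]:
    print(candidate, looks_like_doi(candidate))
# doi_is_registered("10.1000/182") needs network access, so it is not called here.
```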

Prevention and mitigation require a multi-layered approach. No single technique eliminates hallucination risk entirely, but combining strategies can reduce it substantially.

Retrieval-Augmented Generation is currently the most effective technique for grounding AI outputs in verified information. Instead of relying solely on the model’s training data, RAG systems first retrieve relevant information from trusted sources, then use that information to generate responses.
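The retrieve-then-generate flow can be sketched without any external services. This is a toy, not a production design: the word-overlap retriever stands in for a real search index or vector database, the documents and IDs are hypothetical, and the call to the model itself is left out. The two choices worth copying are tagging each passage with an ID the model must cite, and refusing to build a prompt at all when nothing relevant was retrieved.

```python
import re

def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by word overlap with the query (stand-in for a real retriever)."""
    q = tokenize(query)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in docs.items()]
    scored = [s for s in scored if s[0] > 0]  # drop documents with no overlap
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [(doc_id, text) for _, doc_id, text in scored[:k]]

def build_grounded_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Put retrieved passages, tagged with their IDs, ahead of the question."""
    if not passages:
        return ""  # nothing retrieved: better to abstain than let the model guess
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using ONLY the sources below and cite their IDs in brackets. "
        "If the sources do not contain the answer, say \"I don't know.\"\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )

knowledge_base = {  # hypothetical internal documents
    "policy-07": "Bereavement fares must be requested before travel.",
    "faq-12": "Refund requests are processed within 30 days.",
    "hr-03": "Employees accrue 1.5 vacation days per month.",
}
query = "Can bereavement fares be requested after travel?"
prompt = build_grounded_prompt(query, retrieve(query, knowledge_base))
print(prompt)
```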

Studies show that RAG systems improve factual accuracy by roughly 40% compared to standalone LLMs. In customer support deployments, enterprise implementations show about 35% fewer hallucinations when using RAG. Combining RAG with fine-tuning can reduce hallucination rates by up to 50%.

But here’s what most implementations get wrong: they treat retrieval as a solved problem. It’s not. If your retrieval system pulls irrelevant documents, outdated information, or contradictory sources, you’ve just given your AI better ammunition for confident fabrication. The quality of your knowledge base matters more than the sophistication of your retrieval algorithm.

Vector database integration can reduce hallucinations in knowledge retrieval tasks by roughly 28%, but only if the underlying data is clean, current, and comprehensive. Hybrid search approaches that combine keyword matching with semantic search improve grounding accuracy by about 20%. Continuous retrieval updates — refreshing your knowledge base regularly — reduce outdated hallucinations by over 30%.
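Hybrid search has to merge a keyword ranking and a semantic ranking into one list. Reciprocal rank fusion (RRF) is one common way to do that, swapped in here as an example; the percentages above don’t depend on this particular method. The two input rankings are hypothetical, and k = 60 is a commonly used default, not a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one.

    Each document earns 1 / (k + rank) from every list it appears in
    (ranks start at 1). Larger k flattens the advantage of top positions.
    Ties are broken alphabetically so the output is deterministic.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical outputs of two retrievers for the same query.
keyword_ranking = ["policy-07", "faq-12", "hr-03"]     # e.g. exact-term matching
semantic_ranking = ["policy-07", "blog-44", "faq-12"]  # e.g. embedding similarity
print(reciprocal_rank_fusion([keyword_ranking, semantic_ranking]))
```

Documents that both retrievers rank reasonably well rise to the top, which is the point: a passage that matches both the exact terms and the overall meaning is less likely to be a near-miss that invites fabrication.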

The real win from RAG isn’t just lower hallucination rates. It’s traceability. When your AI generates an answer, you can point to the specific documents it used. That makes validation possible and builds user trust even when the AI isn’t perfect.
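Traceability is only worth something if it is checked. Assuming the model was told to cite sources as bracketed IDs (a hypothetical convention), a post-generation check can flag answers that cite nothing, or that cite IDs the retriever never returned, which makes them fabricated references by definition.

```python
import re

CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")  # matches "[doc-id]" style citations

def check_citations(answer: str, retrieved_ids: set[str]) -> dict:
    """Verify that an answer cites sources, and only sources that were retrieved."""
    cited = set(CITATION.findall(answer))
    return {
        "cited": sorted(cited),
        "unknown_citations": sorted(cited - retrieved_ids),  # possible fabrication
        "uncited": not cited,                                # nothing to trace back to
    }

retrieved = {"policy-07", "faq-12"}
answer = "Bereavement fares must be requested before travel [policy-07][policy-2019]."
print(check_citations(answer, retrieved))
```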

Not every decision needs the same level of oversight, but for high-stakes outputs — financial projections, medical advice, legal analysis, strategic recommendations — human verification is non-negotiable.

The challenge is designing human-in-the-loop systems that people will actually use. If your verification process is too cumbersome, users will find ways around it. If it’s too superficial, it won’t catch the problems that matter. You need to match oversight intensity to decision stakes and design workflows that make verification feel like enhancement rather than bureaucracy.

Some organizations implement tiered decision frameworks: AI suggestions that are automatically executed for low-stakes routine tasks, AI recommendations that require human approval for medium-stakes decisions, and AI-assisted analysis with mandatory human review for high-stakes choices. This balances efficiency with safety.
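A tiered framework reduces to a small routing function. The tier names mirror the paragraph above; the confidence gate on the low tier and its 0.9 threshold are assumptions added for illustration, on the reasoning that even routine actions shouldn’t auto-execute when the model itself is unsure.

```python
from enum import Enum

class Stakes(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

def route(stakes: Stakes, confidence: float, auto_threshold: float = 0.9) -> str:
    """Decide what happens to an AI output under a three-tier policy.

    Thresholds are illustrative; tune them against your own calibration data.
    """
    if stakes is Stakes.HIGH:
        return "mandatory_human_review"  # always reviewed, regardless of confidence
    if stakes is Stakes.MEDIUM:
        return "human_approval"          # a person signs off before execution
    # Low stakes: execute automatically, but only when the model is confident.
    return "auto_execute" if confidence >= auto_threshold else "human_approval"

for stakes, conf in [(Stakes.LOW, 0.95), (Stakes.LOW, 0.55),
                     (Stakes.MEDIUM, 0.99), (Stakes.HIGH, 0.99)]:
    print(stakes.name, conf, "->", route(stakes, conf))
```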

The key is making the AI’s uncertainty visible to the human reviewer. Don’t just show the output. Show the confidence scores, the retrieved sources, alternative possibilities the model considered, and any inconsistencies detected during generation. Give reviewers the context they need to make informed judgments, not just rubber-stamp AI outputs.
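Concretely, the reviewer’s view might bundle those items together. The field names are hypothetical and the example values are made up; the design choice that matters is that missing sources are shown loudly rather than silently omitted.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewPacket:
    """Everything a reviewer should see alongside the AI's answer."""
    answer: str
    confidence: float                                      # model-reported or estimated
    sources: list[str] = field(default_factory=list)       # retrieved document IDs
    alternatives: list[str] = field(default_factory=list)  # other candidate answers
    inconsistencies: list[str] = field(default_factory=list)

    def render(self) -> str:
        lines = [f"Answer: {self.answer}", f"Confidence: {self.confidence:.0%}"]
        lines.append("Sources: " + (", ".join(self.sources) or "NONE (unverified)"))
        if self.alternatives:
            lines.append("Other candidates: " + "; ".join(self.alternatives))
        if self.inconsistencies:
            lines.append("Warnings: " + "; ".join(self.inconsistencies))
        return "\n".join(lines)

packet = ReviewPacket(
    answer="Project Titan closed in Q3 2023.",
    confidence=0.62,
    alternatives=["Q1 2024", "Q4 2022"],
    inconsistencies=["3 of 5 runs agreed"],
)
print(packet.render())
```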

Emerging techniques allow AI systems to express uncertainty more explicitly. Instead of generating a single confident answer, these systems can output probability distributions, confidence intervals, or multiple possible answers ranked by likelihood.
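One simple way to produce “multiple possible answers ranked by likelihood” is to sample the model several times and count. The sketch assumes you already have the samples; the probabilities are empirical frequencies, not the model’s internal probabilities, and exact-match counting is cruder than published methods that group answers by meaning (semantic entropy, for example). The normalized entropy condenses the spread into one number: 0 when every sample agrees, 1 when every sample differs.

```python
import math
from collections import Counter

def answer_distribution(samples: list[str]) -> tuple[list[tuple[str, float]], float]:
    """Rank sampled answers by frequency and score how spread out they are.

    Returns (ranked answers with empirical probabilities, normalized entropy).
    """
    counts = Counter(s.strip().lower() for s in samples)
    n = sum(counts.values())
    ranked = [(answer, c / n) for answer, c in counts.most_common()]
    entropy = sum(-p * math.log(p) for _, p in ranked)
    max_entropy = math.log(n) if n > 1 else 1.0  # entropy when all n samples differ
    return ranked, entropy / max_entropy

samples = ["Q3 2023", "Q3 2023", "Q1 2024", "Q3 2023", "Q4 2022"]  # hypothetical
ranked, spread = answer_distribution(samples)
for answer, p in ranked:
    print(f"{answer}: {p:.0%}")
print(f"normalized entropy: {spread:.2f}")
```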

Multi-agent verification frameworks are showing promise in enterprise deployments. These systems use multiple AI models to cross-validate outputs, with each model assigned a specific role in the verification chain. When models disagree significantly, the system flags the output for human review rather than picking the most confident answer.

Uncertainty quantification within multi-agent systems allows agents to communicate confidence levels to each other and weight contributions accordingly. This creates a kind of collaborative doubt — if multiple specialized models express low confidence about different aspects of an output, the system can recognize that the overall answer is unreliable.
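The escalation logic described in these two paragraphs can be sketched as a confidence-weighted vote. Agent roles, thresholds, and the three possible outcomes are assumptions for illustration. Note what the function refuses to do: when agents disagree, it does not side with the most confident one.

```python
from dataclasses import dataclass

@dataclass
class Verdict:
    agent: str          # hypothetical role, e.g. "fact-checker"
    supports: bool      # does this agent accept the draft answer?
    confidence: float   # the agent's confidence in its own verdict, 0..1

def aggregate(verdicts: list[Verdict], min_support: float = 0.75,
              min_confidence: float = 0.6) -> str:
    """Combine verifier verdicts, weighting each by its stated confidence.

    Escalates to a human when agents disagree or when confidence is low
    overall, instead of trusting the single most confident agent.
    """
    total_weight = sum(v.confidence for v in verdicts)
    if total_weight == 0:
        return "escalate_to_human"
    support = sum(v.confidence for v in verdicts if v.supports) / total_weight
    mean_conf = total_weight / len(verdicts)
    if mean_conf < min_confidence:
        return "escalate_to_human"  # collective doubt: nobody is sure
    if support >= min_support:
        return "accept"
    if support <= 1 - min_support:
        return "reject"
    return "escalate_to_human"      # significant disagreement

checks = [
    Verdict("fact-checker", supports=True, confidence=0.9),
    Verdict("policy-checker", supports=False, confidence=0.8),
]
print(aggregate(checks))
```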

Research shows that exposing uncertainty to users helps them detect AI miscalibration, though it also tends to reduce trust in the system overall. This is actually a feature, not a bug. Appropriate skepticism is better than misplaced confidence. If showing uncertainty makes users verify AI outputs more carefully, that’s a win for decision quality even if it feels like a loss for AI adoption.

The real question isn’t whether your AI will hallucinate. It’s whether you’ll know when it does.

Every LLM-based system you deploy will eventually produce confident, plausible, completely wrong outputs. The architecture guarantees it. The question is whether you’ve built detection, validation, and governance systems that catch these errors before they cascade into business problems.

This isn’t just a technical challenge. It’s a governance challenge. The organizations that handle AI overconfidence best aren’t the ones with the most sophisticated models. They’re the ones with clear accountability for AI outputs, regular audits of model behavior, robust testing protocols, and cultures that reward honest uncertainty over confident speculation.

Start with an audit. Which systems in your tech stack are making decisions based on AI outputs? What validation exists? How would you know if the AI started hallucinating more frequently? What’s your plan when — not if — a confident fabrication reaches a customer or executive?
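The question “How would you know if the AI started hallucinating more frequently?” has a mechanical answer: track the rate of flagged outputs over time and alert on drift. This sketch assumes each response has already been marked as flagged or not by upstream checks (consistency, citation, or human review); the window size, baseline, and 2× tolerance are placeholders to replace with numbers from your own testing.

```python
from collections import deque

class HallucinationMonitor:
    """Track the share of flagged outputs over the most recent responses
    and signal an alert when it drifts above a baseline."""

    def __init__(self, window: int = 500, baseline: float = 0.02, tolerance: float = 2.0):
        self.recent = deque(maxlen=window)  # True = output was flagged
        self.baseline = baseline            # expected flag rate from your own testing
        self.tolerance = tolerance          # alert above tolerance x baseline

    def record(self, flagged: bool) -> bool:
        """Log one output; return True if the alert threshold is crossed."""
        self.recent.append(flagged)
        if len(self.recent) < self.recent.maxlen:
            return False                    # wait for a full window before judging
        rate = sum(self.recent) / len(self.recent)
        return rate > self.baseline * self.tolerance

monitor = HallucinationMonitor(window=10, baseline=0.1, tolerance=2.0)
stream = [False] * 10 + [True, True, True]
alerts = [monitor.record(flag) for flag in stream]
print(alerts.index(True))  # position of the first response that triggered an alert
```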

Because the AI that sounds most sure of itself might be the one you should trust the least.
