You just signed off on a $250,000 base salary for a senior AI engineer. The board is thrilled. Your investors are satisfied that you are finally checking the generative AI box. You think you just bought a massive wave of innovation that will propel your company into the next decade.

Let’s be honest. What you actually bought is a cultural hand grenade.

Within three weeks, the CFO will be sweating over the cloud compute bills, the CTO will be nervous about data governance, and your traditional software development team will be on the verge of an open revolt. Gartner recently highlighted the massive spike in the cost and demand for AI talent compared to traditional IT, but the real cost isn’t the salary—it’s the friction.

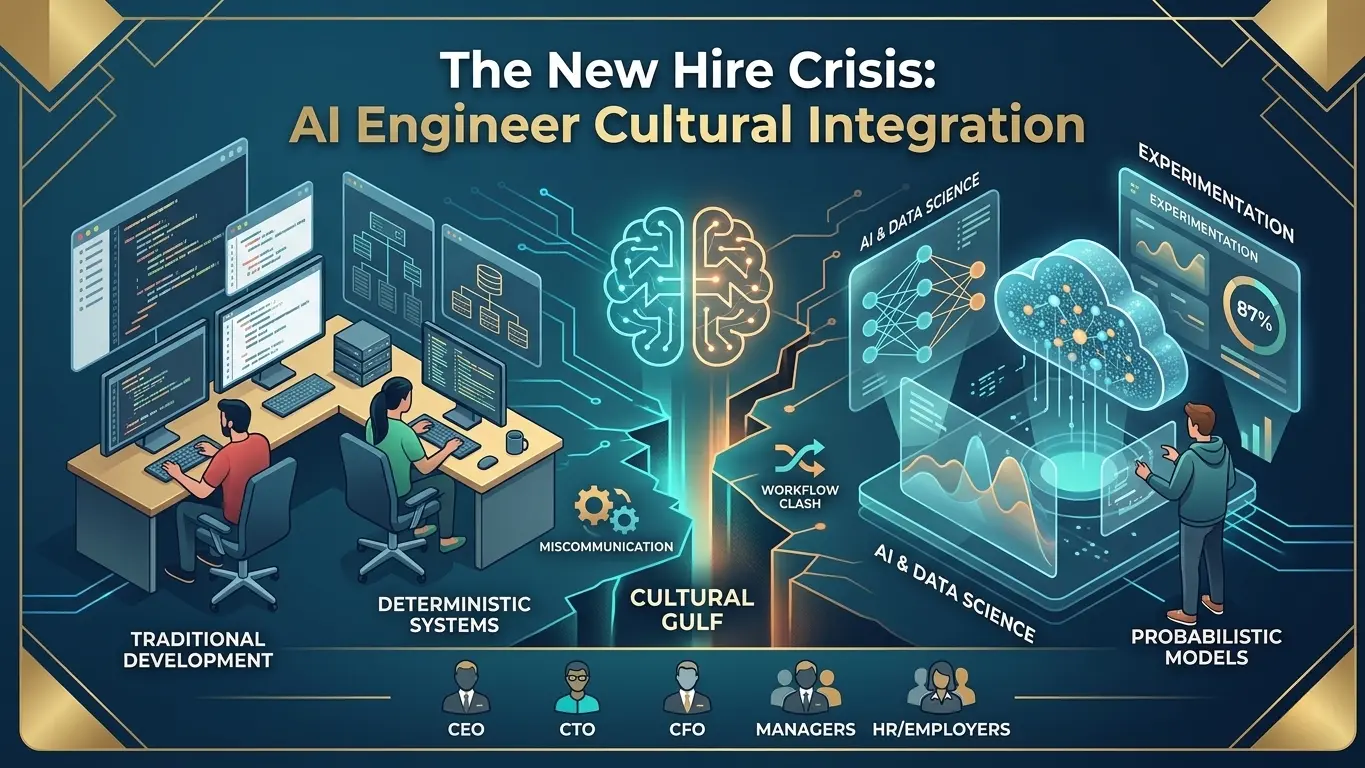

The reality of enterprise AI talent acquisition is that bringing an AI specialist into a legacy engineering department is like dropping a rogue artist into an accounting firm. They do not speak the same language. They do not measure success the same way. And if top management does not intervene, the clash will stall your entire digital roadmap.

If you are a CEO or CTO trying to modernize your tech stack, your biggest hurdle isn’t the technology itself. It is integrating AI engineers into the culture you already have. Here is exactly why this new breed of developer is breaking your company culture, and the operational playbook you need to disarm the tension.

To understand why your engineering floor is suddenly a warzone, you have to understand the fundamental difference in mindset between an AI developer and a traditional software engineer.

Traditional software engineers are deterministic thinkers. They build bridges. In their world, if you write a specific piece of code and input “A,” the system must output “B” with 100% certainty, every single time. Their entire career has been measured by predictability, uptime, and rigorous testing environments. If a bridge falls down 1% of the time, it is a catastrophic failure.

AI engineers, on the other hand, are probabilistic thinkers. They do not build bridges; they forecast the weather. In their world, if you input “A,” the system will output “B” with roughly 87% confidence, and occasionally it will output “C” because the neural network weighted a hidden variable differently today.
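To make the contrast concrete, here is a minimal Python sketch of how the two camps write a test. Everything in it is illustrative: `add_tax`, `noisy_classifier`, the 87% hit rate, and the 0.80 acceptance threshold are assumptions made up for this example, not a real model or a recommended bar.

```python
import random


def add_tax(amount_cents: int, rate_percent: int) -> int:
    """Deterministic: the same input always produces the same output."""
    return amount_cents + amount_cents * rate_percent // 100


def noisy_classifier(x: float, rng: random.Random) -> str:
    """Hypothetical stand-in for a model: right most of the time, not always."""
    correct = "B" if x >= 0 else "C"
    wrong = "C" if correct == "B" else "B"
    # Assumed hit rate of 87%, chosen only to mirror the example in the text.
    return correct if rng.random() < 0.87 else wrong


def accuracy(n_samples: int, seed: int = 0) -> float:
    """Fraction of correct answers over many randomly drawn inputs."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_samples):
        x = rng.uniform(-1, 1)
        expected = "B" if x >= 0 else "C"
        if noisy_classifier(x, rng) == expected:
            hits += 1
    return hits / n_samples


# The bridge builder's test: exact equality, every single time.
assert add_tax(10_000, 8) == 10_800

# The weather forecaster's test: a threshold over many samples.
score = accuracy(10_000)
assert score >= 0.80, f"accuracy {score:.2f} is below the agreed threshold"
```

The second assertion is the one that makes a traditional engineer uneasy: it passes even though the function is wrong on a meaningful share of individual calls. Agreeing on that threshold, and on what happens to the cases that miss, is a management decision as much as an engineering one.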

When you force a probabilistic thinker to work inside a deterministic system, chaos ensues. The traditional engineers view the AI engineers as reckless cowboys introducing massive instability into their pristine codebase. The AI engineers view the traditional team as slow, bureaucratic dinosaurs blocking innovation.

This friction is exactly why AI transformations fail before they ever reach production. According to frameworks developed by MIT Sloan, managing data scientists and AI specialists requires a completely different operational environment than managing standard DevOps teams. If you apply legacy software rules to probabilistic models, you will crush the innovation you just paid a premium to acquire.

Over the last decade, enterprise CTOs have worked incredibly hard to kill the “move fast and break things” mentality. We implemented strict CI/CD pipelines, robust QA testing, and zero-trust security architectures. We decided that moving fast wasn’t worth it if it meant breaking client trust or leaking proprietary data.

Then, generative AI arrived, and it resurrected the cowboy coding culture overnight.

What most people miss is that many modern AI engineers are used to operating in highly experimental, unstructured environments. They are used to tweaking prompts, adjusting weights, and rapidly iterating until the output looks “good enough.”

This disruption to established workflows is terrifying for a veteran CTO. You cannot build enterprise software on good vibes. Teams have to move from vibe coding to spec-driven development, and quickly. When an AI model generates an output, it isn’t just a fun experiment. It might be an automated decision executing a financial transaction or sending a client email.

If the AI engineer’s primary goal is rapid experimentation, and the security architect’s primary goal is risk mitigation, top management must step in to referee. You cannot leave them to figure it out themselves.

While the CTO is fighting fires in the codebase, the CFO is staring at a massive financial black hole.

Managing AI engineering teams is notoriously difficult because traditional productivity KPIs fail miserably when applied to AI development. For a standard software engineer, you can track sprint points, Jira tickets resolved, and lines of code committed. You know exactly what you are getting for their salary.

How do you measure the output of an AI engineer? They might spend three weeks staring at a screen, adjusting the context window of a Large Language Model, and seemingly producing nothing of value. Then, on a Tuesday afternoon, they tweak a single parameter that suddenly automates a workflow saving the company $400,000 a year.

Because the workflow is highly experimental, the ROI is ambiguous and lumpy. This causes massive friction with the rest of the company. Your senior full-stack developers, who have been with the company for five years, are watching the new 25-year-old AI hire pull a higher base salary while seemingly ignoring all the standard sprint deadlines.

If management does not clearly define what “success” looks like for the AI team, resentment will rot your engineering culture from the inside out.

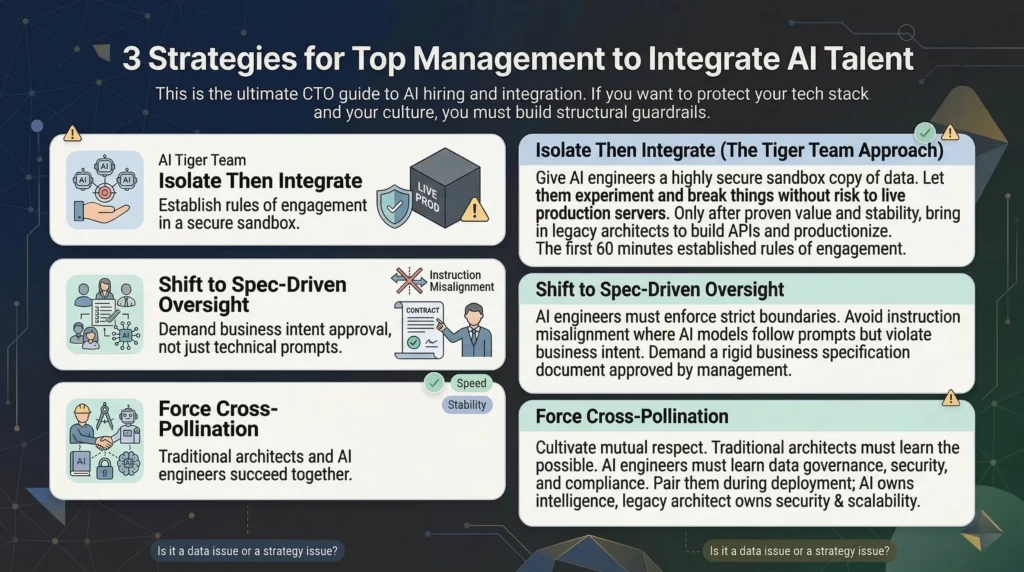

This is the ultimate CTO guide to AI hiring and integration. If you want to protect your tech stack and your culture, you have to build structural guardrails.

Do not drop a new AI engineer directly into your legacy software team and tell them to “collaborate.” It will fail.

Instead, use a Tiger Team approach. Give your AI engineers a highly secure, isolated sandbox environment with a copy of your structured data. Let them experiment, break things, and build proof-of-concept models without any risk of taking down your live production servers.

Remember, the first 60 minutes of deployment establish the rules of engagement. Only after an AI model has proven its value and stability in the sandbox should you bring in the traditional engineering team to harden the code, build the APIs, and push it to production.

Your AI engineers must understand that “almost correct” is completely unacceptable in an enterprise environment. You must enforce strict boundaries around what the AI is allowed to do.

If you let AI talent run wild without business logic constraints, you invite massive technical risks, specifically instruction misalignment. This happens when an AI model technically follows the engineering prompt but completely violates the intent of the business rule because the engineer didn’t understand the corporate context. You fix this by demanding that every AI project starts with a rigid business specification document approved by management, not just a technical prompt.
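One way to picture a business specification that constrains a model is to write the approved limits down as data and check every AI-proposed action against them before anything executes. The sketch below assumes a hypothetical refund workflow; `BusinessSpec`, `ProposedAction`, `check_action`, and the specific limits are invented for illustration and are not taken from any particular framework.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class BusinessSpec:
    """Hypothetical management-approved limits for one AI workflow."""
    allowed_actions: FrozenSet[str]
    max_refund_usd: float
    approved_by: str


@dataclass(frozen=True)
class ProposedAction:
    """What the model wants to do, before anything is executed."""
    action: str
    amount_usd: float


def check_action(spec: BusinessSpec, proposed: ProposedAction) -> List[str]:
    """Return a list of violations; an empty list means the action may proceed."""
    violations: List[str] = []
    if not spec.approved_by:
        violations.append("spec has no management approver")
    if proposed.action not in spec.allowed_actions:
        violations.append(f"action '{proposed.action}' is not on the approved list")
    if proposed.action == "refund" and proposed.amount_usd > spec.max_refund_usd:
        violations.append(
            f"refund of ${proposed.amount_usd:,.2f} exceeds the "
            f"${spec.max_refund_usd:,.2f} limit"
        )
    return violations


# Assumed example values: the approver and the $200 limit are placeholders.
spec = BusinessSpec(
    allowed_actions=frozenset({"refund", "send_email"}),
    max_refund_usd=200.0,
    approved_by="VP Operations",
)
print(check_action(spec, ProposedAction("refund", 5000.0)))
```

The point of the design is that the limits live outside the prompt. The model can be perfectly obedient to its instructions and still be stopped here if what it proposes breaks the rule the business actually cares about.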

The long-term goal of integrating AI engineers into your culture is mutual respect.

Your traditional architects need to learn the art of the possible from your AI engineers. Conversely, your AI engineers desperately need to learn data governance, security compliance, and system architecture from your veterans.

Force cross-pollination by pairing them up during the deployment phase. The AI engineer owns the intelligence of the model; the legacy architect owns the security and scalability of the pipeline. They succeed or fail together.

The root of the new hire crisis often starts in the interview room. A successful strategy for hiring AI talent requires throwing out your old tech assessments.

Stop testing candidates on basic Python syntax or their ability to recite machine learning algorithms from memory. AI tools can already write serviceable code for that kind of exercise. What you need to test for is “systems thinking.”

Recent studies from Harvard Business Review indicate that the most successful enterprise AI deployments are led by engineers who understand business logic, risk management, and outcome-based design.

During the interview, give the candidate a messy, ambiguous business problem. Ask them how they would validate the data, how they would measure model drift over six months, and how they would explain a hallucination to the CFO.
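If you want a sense of what a good answer to the drift question might look like, one common approach is to compare the distribution of model scores from a baseline period against a later period using the Population Stability Index (PSI). The sketch below is a minimal pure-Python version under stated assumptions: the bin edges, the sample scores, and the small floor used to avoid dividing by zero are all illustrative choices, and any alert threshold should be agreed on by your own team.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin defined by sorted edges (len(edges) + 1 bins)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        # Bin index = number of edges the value has reached or passed.
        counts[sum(v >= e for e in edges)] += 1
    total = len(values)
    # Floor each share at a tiny value so empty bins do not cause log(0).
    return [max(c / total, 1e-6) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float],
        edges: Sequence[float]) -> float:
    """Population Stability Index: 0 means identical binned distributions."""
    b = bin_fractions(baseline, edges)
    c = bin_fractions(current, edges)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Made-up model scores for a launch month and for a month six months later.
launch_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
later_scores = [0.7, 0.8, 0.9, 0.9, 0.95, 0.6, 0.85, 0.75]
edges = [0.25, 0.5, 0.75]

print(f"PSI vs. launch: {psi(launch_scores, later_scores, edges):.2f}")
```

A candidate who thinks in systems will go one step further than the number: who gets paged when it crosses the threshold, how often it is recomputed, and what the rollback plan is.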

If they only want to talk about parameters and model sizes, pass on them. If they start talking about data pipelines, auditability, and guardrails, make the offer.

Hiring an AI engineer is not just an HR objective; it is a fundamental operational redesign.

The companies that win the next decade will not be the ones who hoard the most expensive AI talent. The winners will be the companies whose top management successfully bridges the gap between the probabilistic innovators and the deterministic operators.

Stop letting your tech teams fight a silent cultural war. Acknowledge the friction, establish the new rules of engagement, and turn that cultural hand grenade into the engine that actually drives your business forward.
