Your AI just handed you a research summary. It cited three academic papers, a Harvard study, and a 2021 legal case. Everything looks legitimate. The references are formatted correctly. The author names sound real.

None of them exist.

That’s fabricated sources hallucination, and it’s arguably the most deceptive form of AI error that enterprise teams face today. Unlike a factual mistake that a subject-matter expert might catch, a fabricated citation is, by virtue of how the model works, built to look right while being completely wrong. It pattern-matches what a real source looks like without any actual source behind it.

Here’s what most people miss: this isn’t rare. It isn’t a fringe edge case. And it’s already cost organizations far more than they’ve publicly admitted.

Fabricated sources hallucination occurs when a large language model (LLM) invents research papers, legal cases, journal articles, URLs, expert quotes, or authors that appear entirely credible but cannot be verified anywhere in reality.

The model doesn’t “look up” a source and misremember it. It generates one from scratch, constructing a plausible-sounding title, a believable author name, a realistic journal or conference, and sometimes even a DOI or URL that leads nowhere. The output looks like a properly cited reference. It behaves like one. It just doesn’t correspond to anything real.

This is distinct from a factual hallucination, where the model states an incorrect fact. In fabricated sources hallucination, the model creates the entire evidentiary foundation (the citation that’s supposed to prove the fact) out of thin air.

The example from our image illustrates this precisely: an AI confidently citing “a 2021 Harvard study titled AI Moral Systems by Dr. Stephen Rowland” or referencing “State vs. DigitalMind (2019)”. These are academic and legal references that sound completely legitimate and are completely fictional. That’s the threat.

Understanding why this happens is critical to preventing it. The cause isn’t carelessness; it’s architecture.

LLMs are trained to predict the most statistically probable next token. When you ask one to produce a research summary with citations, it draws on training that included millions of documents with properly formatted references. So it pattern-matches what a citation looks like (author, title, journal, year, DOI) and generates one that fits that pattern. It has no built-in mechanism to check whether that citation actually exists. It’s not retrieving from a database; it’s generating from a learned distribution.
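As a deliberately crude analogy (not how a transformer actually works), the Python sketch below “learns” only the shape of a citation from three hypothetical placeholder records and recombines their fields. Every output is well-formed, yet most combinations never existed, and nothing in the generator checks. All authors, titles, and venues here are invented placeholders.

```python
import random

# Hypothetical "training data": placeholder records of (author, title, venue, year).
TRAINING_REFS = [
    ("Author, A.", "Placeholder Study on Agent Ethics", "Example Journal A", 2019),
    ("Writer, B.", "Placeholder Survey of Model Calibration", "Example Conference B", 2020),
    ("Scholar, C.", "Placeholder Audit of Language Models", "Example Journal C", 2021),
]
KNOWN = set(TRAINING_REFS)


def toy_citation(rng: random.Random) -> tuple:
    """Sample each field independently from the values seen in training.

    The result always has the right shape (author, title, venue, year), but no
    step checks whether that exact combination corresponds to a real record.
    """
    columns = list(zip(*TRAINING_REFS))  # one tuple of observed values per field
    return tuple(rng.choice(values) for values in columns)


def format_ref(ref: tuple) -> str:
    author, title, venue, year = ref
    return f"{author} ({year}). {title}. {venue}."


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(5):
        ref = toy_citation(rng)
        label = "real" if ref in KNOWN else "FABRICATED"
        print(f"{label:>10}: {format_ref(ref)}")
```

With three records there are 81 possible field combinations and only 3 real ones, so almost every output is a well-formatted reference to nothing.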

The problem is compounded by a finding from MIT Research in January 2025: AI models are 34% more likely to use highly confident language when generating incorrect information. The more wrong the model is, the more authoritative it sounds. Fabricated citations don’t arrive with disclaimers; they arrive formatted and confident.

There are two specific patterns worth knowing:

Subtle corruption. The model takes a real paper and makes small alterations (changing an author’s name slightly, paraphrasing the title, swapping the journal), producing something plausible but wrong. GPTZero calls this “vibe citing”: citations that look accurate at a glance but fall apart under scrutiny.

Full fabrication. The model generates a completely non-existent author, title, publication, and identifier from scratch. No real source was consulted or distorted. The entire reference is invented.
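One way to operationalize the distinction, assuming you already have a trusted list of real titles (for example, exported from a reference manager), is a fuzzy comparison: an exact match is verified, a near match suggests subtle corruption, and no close match suggests full fabrication. This sketch uses Python’s standard `difflib`; the 0.8 similarity threshold is an arbitrary illustrative choice, not a calibrated value.

```python
from difflib import SequenceMatcher
from typing import List, Optional, Tuple


def normalize(title: str) -> str:
    """Lower-case and collapse whitespace so trivial formatting differences don't count."""
    return " ".join(title.lower().split())


def classify_title(
    cited: str, trusted_titles: List[str], near: float = 0.8
) -> Tuple[str, Optional[str]]:
    """Return (label, closest trusted title) for a cited title.

    - "verified": identical to a trusted title after normalization
    - "possible subtle corruption": close to, but not identical with, a trusted title
    - "no match (possible full fabrication)": nothing in the trusted list is close
    """
    target = normalize(cited)
    best, best_score = None, 0.0
    for title in trusted_titles:
        score = SequenceMatcher(None, target, normalize(title)).ratio()
        if score > best_score:
            best, best_score = title, score
    if best_score == 1.0:
        return "verified", best
    if best_score >= near:
        return "possible subtle corruption", best
    return "no match (possible full fabrication)", best
```

Returning the closest trusted title alongside the label gives a reviewer something concrete to compare against when a near match is flagged.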

Both patterns are optimized, structurally, to pass a quick visual review. That’s precisely why they’re so dangerous at scale.

Let’s be honest about the damage this has caused, because the case record from 2025 and 2026 alone is substantial.

In legal practice. The UK High Court issued a formal warning in June 2025 after discovering multiple fictitious case citations in legal submissions, some entirely fabricated and others materially inaccurate, suspected to have been generated by AI without verification. The presiding judge stated directly that, in the most egregious cases, deliberately placing false material before the court can constitute the criminal offence of perverting the course of justice.

In the United States, courts across jurisdictions, including California, Florida, and Washington, issued sanctions throughout 2025 over AI-generated filings containing hallucinated cases. One Florida case involved a husband who submitted a brief in which approximately 11 of the 15 cited cases were entirely fabricated, and who then requested attorney’s fees based on one of those fictional citations. The appellate court vacated the order and remanded for further proceedings.

A California appellate court, in its first published opinion on the topic, was blunt: “There is no room in our court system for the submission of fake, hallucinated court citations.” Across real legal and enterprise cases, the pattern of citation hallucinations is consistent and sobering.

In academic research. GPTZero scanned 4,841 papers accepted at NeurIPS 2025, the world’s flagship machine learning conference, and found at least 100 confirmed hallucinated citations across more than 50 papers. These papers had already passed peer review, been presented live, and been published. A Nature analysis separately estimated that tens of thousands of 2025 publications may include invalid AI-generated references, with 2.6% of computer science papers containing at least one potentially hallucinated citation, up from 0.3% in 2024. That is more than an eight-fold increase in a single year.

In enterprise consulting. Deloitte Australia had to partially refund its fee for a 2025 government report worth AU$440,000 after most of the report’s references and several quotations were found to be pure fiction, hallucinated by an AI assistant. One of the world’s largest consultancies was caught out by citations its team hadn’t verified.

In healthcare research. A study published in JMIR Mental Health in November 2025 found that GPT-4o fabricated 19.9% of all citations across six simulated literature reviews. For specialized, less publicly known topics like body dysmorphic disorder, fabrication rates reached 28–29%. In a field where citations anchor clinical decisions, that’s not just a data point; it’s a patient-safety issue.

The real question is: how many fabricated citations haven’t been caught yet?

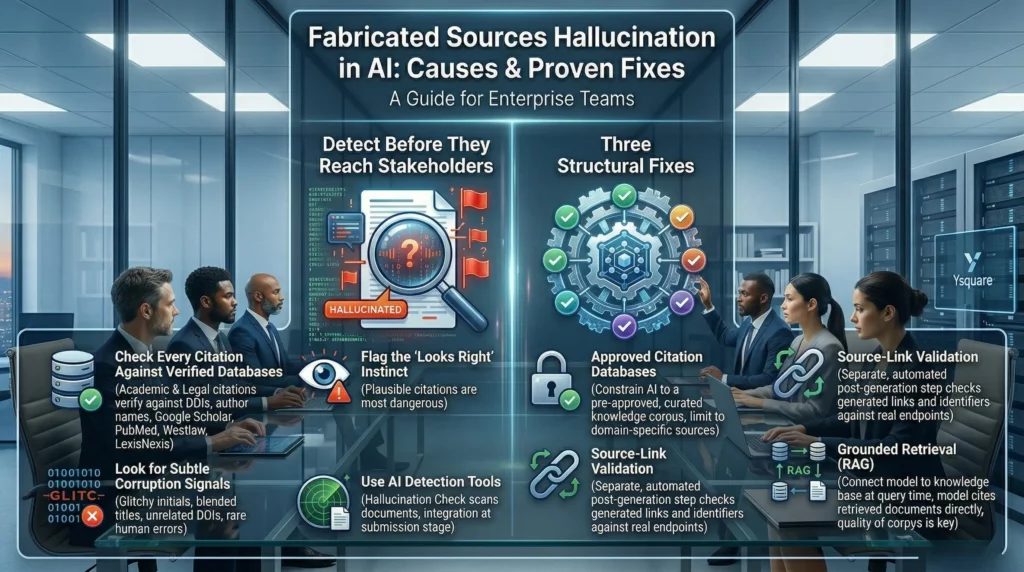

Detection is the first line of defense, and it’s more achievable than most organizations realize. The key is building verification into your workflow rather than treating AI output as a finished deliverable.

Check every citation against a verified database. For academic sources, that means DOIs that resolve, author names that appear in recognized scholarly databases, and titles that can be found in Google Scholar, PubMed, or equivalent. For legal citations, every case must be confirmed in Westlaw, LexisNexis, or official court records before it enters any filing or report.
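For the DOI part of this check, a minimal Python sketch might look like the following. It assumes the public doi.org handle API (`https://doi.org/api/handles/<doi>`), which reports whether a DOI is registered with any registration agency; the function names are illustrative, and the network call is injectable so the logic can be tested offline.

```python
import json
import re
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Optional

# Pattern Crossref suggests for matching the vast majority of modern DOIs.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)
PREFIXES = ("https://doi.org/", "http://doi.org/", "https://dx.doi.org/", "doi:")


def normalize_doi(raw: str) -> str:
    """Strip common prefixes such as 'doi:' or 'https://doi.org/'."""
    doi = raw.strip()
    for prefix in PREFIXES:
        if doi.lower().startswith(prefix):
            doi = doi[len(prefix):]
    return doi


def fetch_handle(doi: str) -> Optional[dict]:
    """Query the doi.org handle API; return parsed JSON, or None on HTTP 404."""
    url = "https://doi.org/api/handles/" + urllib.parse.quote(doi)
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return json.load(resp)
    except urllib.error.HTTPError as err:
        if err.code == 404:  # handle not registered
            return None
        raise


def doi_status(raw: str, fetch: Callable[[str], Optional[dict]] = fetch_handle) -> str:
    """Classify a cited DOI as 'malformed', 'unresolved', or 'resolves'."""
    doi = normalize_doi(raw)
    if not DOI_PATTERN.match(doi):
        return "malformed"
    record = fetch(doi)
    # responseCode 1 means the handle exists; anything else is treated as unresolved.
    if record is None or record.get("responseCode") != 1:
        return "unresolved"
    return "resolves"
```

A DOI that resolves only proves the identifier is registered, not that it points to the paper being cited, so the registered title and authors still need to be compared with the citation.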

Flag the “looks right” instinct. The most dangerous fabricated citations are the ones that look plausible. Train your team to be most suspicious when a reference seems particularly well-suited to the argument being made, because a model generating from pattern-matching will produce references that sound relevant by design.

Look for subtle corruption signals. GPTZero’s analysis of NeurIPS 2025 papers identified specific patterns: authors whose initials don’t match their full names, titles that blend elements of multiple real papers, DOIs that resolve to unrelated documents, or publication venues that exist but never published the referenced work. These errors are rare in human-written text and common in AI-assisted drafting.
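Several of these signals can be checked mechanically once a DOI has been resolved to its registered record. The sketch below assumes you already have both the cited metadata and the resolved record as plain dictionaries (the field names are hypothetical), and it uses deliberately naive rules: title similarity via `difflib`, an exact venue comparison, and a first-letter check on given names.

```python
import re
from difflib import SequenceMatcher
from typing import Dict, List


def initials_consistent(full_name: str, cited_initials: str) -> bool:
    """Check that cited initials (e.g. 'J. A.') match the given names in a full name."""
    # Treat every token except the last as a given name: 'Jane Ann Smith' -> ['Jane', 'Ann'].
    given = full_name.split()[:-1]
    expected = [name[0].upper() for name in given]
    actual = re.findall(r"[A-Z]", cited_initials.upper())
    return expected == actual


def corruption_signals(
    cited: Dict[str, str], resolved: Dict[str, str], threshold: float = 0.9
) -> List[str]:
    """Compare a cited reference with the record its DOI actually resolves to.

    `cited` needs 'title', 'venue', 'author_initials'; `resolved` needs
    'title', 'venue', 'author_full_name'. These keys are illustrative.
    """
    signals = []
    title_sim = SequenceMatcher(
        None, cited["title"].lower(), resolved["title"].lower()
    ).ratio()
    if title_sim < threshold:
        signals.append(f"DOI resolves to a different title (similarity {title_sim:.2f})")
    if cited["venue"].strip().lower() != resolved["venue"].strip().lower():
        signals.append("venue differs from the record the DOI points to")
    if not initials_consistent(resolved["author_full_name"], cited["author_initials"]):
        signals.append("author initials do not match the registered full name")
    return signals
```

Anything flagged should go to a human reviewer; rules this simple will misfire on hyphenated names, abbreviated venue names, and retitled preprints.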

Use AI detection tools at submission stage. Tools like GPTZero’s Hallucination Check scan documents for citations that can’t be matched to real online sources and flag them for human review. ICLR has already integrated this into its formal publication pipeline. Enterprises deploying AI for research or documentation should consider equivalent verification gates.
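A submission-stage gate can be as simple as extracting every identifier from a draft and refusing to pass it until each one is confirmed. This sketch only handles DOIs, uses a loose regular expression, and takes the actual lookup as a parameter; `is_verified` is a placeholder, not GPTZero’s or any vendor’s API.

```python
import re
from typing import Callable, Dict, List

# Loose pattern for DOIs embedded in running text; it stops at whitespace,
# quotes, and angle brackets. Trailing punctuation is stripped afterwards.
DOI_IN_TEXT = re.compile(r"\b10\.\d{4,9}/[^\s\"<>]+")


def extract_dois(text: str) -> List[str]:
    """Find DOI-like strings and drop sentence punctuation stuck to their ends."""
    return [match.rstrip(".,;)") for match in DOI_IN_TEXT.findall(text)]


def submission_gate(text: str, is_verified: Callable[[str], bool]) -> Dict[str, object]:
    """Flag every DOI in a draft that `is_verified` cannot confirm.

    `is_verified` stands in for whatever lookup you trust (a DOI resolver,
    an internal index, a commercial checker); nothing here calls a real service.
    """
    dois = extract_dois(text)
    flagged = [doi for doi in dois if not is_verified(doi)]
    return {"checked": len(dois), "flagged": flagged, "passed": not flagged}
```

A draft with no DOIs passes trivially here, so references without identifiers, such as case citations, still need a separate check before anything is submitted.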

The most reliable structural fix is constraining your AI system to generate citations only from a pre-approved, verified knowledge corpus. Rather than letting the model draw from its entire training distribution which includes patterns of what citations look like, not actual verified sources you limit it to a curated database of real, verified documents.

This is the approach behind tools like Elicit and Research Rabbit in academic contexts, and Westlaw’s AI-Assisted Research in legal practice. The model can only cite what’s actually in the approved corpus. If no real source supports a claim, there is nothing to cite, and a well-designed system reports that gap instead of inventing a reference: its citations come from retrieval over real documents, not from open-ended generation.

For enterprises, this means building and maintaining a proprietary knowledge base of verified sources specific to your domain: verified regulatory documents, peer-reviewed studies, official case law, internal reports reviewed by subject-matter experts. The quality of that database directly determines the quality of the citations your AI produces.
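The “cite only from the approved corpus” rule can be sketched as an allowlist that returns nothing rather than something invented. In this minimal Python illustration, the `ApprovedSource` fields, the word-overlap matching, and the `[no approved source found]` marker are all hypothetical choices; a production system would use proper retrieval and richer provenance metadata.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ApprovedSource:
    source_id: str
    citation: str      # human-readable reference, entered by a reviewer
    text: str          # the content the system is allowed to cite
    reviewed_by: str   # who vetted it (illustrative provenance field)


class ApprovedCorpus:
    """A toy allowlist: the only things the system may cite are entries in here."""

    def __init__(self, sources: List[ApprovedSource]):
        self._by_id: Dict[str, ApprovedSource] = {s.source_id: s for s in sources}

    def find_support(self, claim: str, min_overlap: int = 2) -> Optional[ApprovedSource]:
        """Return the source sharing the most words with the claim, or None.

        Word overlap is a stand-in for real retrieval (BM25, embeddings, ...).
        """
        claim_words = set(claim.lower().split())
        best, best_overlap = None, 0
        for source in self._by_id.values():
            overlap = len(claim_words & set(source.text.lower().split()))
            if overlap > best_overlap:
                best, best_overlap = source, overlap
        return best if best_overlap >= min_overlap else None


def cite_or_decline(corpus: ApprovedCorpus, claim: str) -> str:
    source = corpus.find_support(claim)
    if source is None:
        # No approved source: surface the gap instead of generating a reference.
        return f"{claim} [no approved source found]"
    return f"{claim} [{source.citation}]"
```

The design choice that matters is the `None` path: when the corpus has nothing, the output says so explicitly, which is far easier to review than a plausible-looking reference.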

Even when an AI system is grounded in a retrieval corpus, citation validation should be a separate, automated step in the output pipeline. Every generated reference should be checked programmatically before it reaches a human reader.

The technical approach here is elegant: assign a unique identifier to every document chunk in your knowledge base at ingestion. When the model generates a citation, it produces the identifier, not a free-form reference. A post-generation verification step then confirms that the identifier matches an actual document in the corpus. Any identifier that doesn’t match flags a potential hallucination before the output is delivered.

This approach was described in detail in a 2025 framework for ghost-reference elimination: the model generates text with only the unique ID, a non-LLM method verifies that the ID exists in the database, and only then is the citation replaced with its human-readable reference. No free-form citation generation means no opportunity for free-form citation fabrication.
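The ID-based pipeline can be sketched in a few lines. Assumptions: chunk IDs are simple sequential strings, the model has been instructed to emit citations as `[[SRC:<id>]]` markers (a made-up convention for this example), and verification is a plain dictionary lookup, so no LLM is involved in deciding whether a source exists.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical citation marker the model is told to emit, e.g. [[SRC:doc0001]].
MARKER = re.compile(r"\[\[SRC:([A-Za-z0-9_-]+)\]\]")


def ingest(chunks: List[Tuple[str, str]]) -> Dict[str, Dict[str, str]]:
    """Assign a unique ID to every (reference, text) chunk at ingestion time."""
    return {
        f"doc{i:04d}": {"reference": reference, "text": text}
        for i, (reference, text) in enumerate(chunks)
    }


def resolve_citations(draft: str, store: Dict[str, Dict[str, str]]) -> Tuple[str, List[str]]:
    """Verify each ID marker with a dictionary lookup, then swap verified IDs
    for human-readable references. Returns the rendered text and unknown IDs."""
    unknown: List[str] = []

    def substitute(match: re.Match) -> str:
        chunk_id = match.group(1)
        if chunk_id in store:
            return f"({store[chunk_id]['reference']})"
        unknown.append(chunk_id)  # the ID does not exist: potential hallucination
        return "[UNVERIFIED CITATION]"

    return MARKER.sub(substitute, draft), unknown
```

In practice you would likely block delivery, or route the draft to review, whenever the list of unknown IDs is non-empty, rather than only marking the text.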

For organizations not building custom pipelines, source-link validation can be implemented through existing LLMOps monitoring tools that check generated URLs and DOIs against real endpoints in real time.
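For teams rolling their own check instead of an LLMOps tool, a basic URL probe needs only Python’s standard library. This sketch sends a `HEAD` request per link and classifies the result; the probe function is injectable so the classification logic can be tested without network access, and the status labels are illustrative.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, List
from urllib.parse import urlparse


def probe_url(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for a HEAD request, or 0 if the host can't be reached."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:  # server answered with 4xx/5xx
        return err.code
    except OSError:  # DNS failure, refused connection, timeout, ...
        return 0


def validate_links(urls: List[str], probe: Callable[[str], int] = probe_url) -> Dict[str, str]:
    """Classify each generated URL as malformed, reachable, unreachable, or broken."""
    results = {}
    for url in urls:
        parsed = urlparse(url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            results[url] = "malformed"
            continue
        status = probe(url)
        if 200 <= status < 400:
            results[url] = "reachable"
        elif status == 0:
            results[url] = "unreachable"
        else:
            results[url] = f"broken (HTTP {status})"
    return results
```

Some servers reject `HEAD` requests (HTTP 405) or block automated clients, so a fallback `GET` is often worth adding; and a reachable URL still doesn’t prove the page supports the claim it’s attached to.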

The third fix is the architectural foundation that makes the first two possible: Retrieval-Augmented Generation (RAG). Rather than asking a model to generate citations from memory, RAG connects the model to your verified knowledge base at query time, retrieving actual documents before generating any response.

The impact on fabrication specifically is significant. When the model is generating with retrieved documents in context, it can cite those documents directly. It doesn’t need to pattern-match what a citation looks like from training data, because actual sources are present in its input. Properly implemented RAG reduces hallucination rates by 40–71% in many enterprise scenarios, and its impact on fabricated sources specifically is even more pronounced because retrieval-grounded systems have an actual source to cite.
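The mechanics of putting retrieved sources into the model’s input can be sketched as follows. Word-overlap ranking stands in for a real retriever (BM25 or vector search), and the prompt wording is illustrative, not a template from any particular framework. Labeling each passage with an ID is what lets the output be checked against the corpus afterwards.

```python
from typing import Dict, List, Tuple


def retrieve(query: str, corpus: Dict[str, str], k: int = 2) -> List[Tuple[str, str]]:
    """Rank documents by words shared with the query (a toy stand-in for BM25
    or vector search) and return the top-k (doc_id, text) pairs."""
    query_words = set(query.lower().split())
    scored = []
    for doc_id, text in corpus.items():
        overlap = len(query_words & set(text.lower().split()))
        if overlap:
            scored.append((overlap, doc_id, text))
    scored.sort(key=lambda item: (-item[0], item[1]))  # best first, ties by ID
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_prompt(query: str, passages: List[Tuple[str, str]]) -> str:
    """Place retrieved passages, labelled with their IDs, into the model's input."""
    if not passages:
        return (
            f"Question: {query}\n"
            "No sources were retrieved. Say that you cannot answer from the approved sources."
        )
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below. Cite them by their bracketed ID; "
        "if they do not support an answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )
```

An instruction to cite only bracketed IDs reduces, but does not eliminate, invented citations, which is why it pairs well with a post-generation check that every cited ID actually exists in the corpus.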

Here’s the catch that most implementations miss: RAG is only as reliable as the knowledge base it retrieves from. A poorly maintained, outdated, or incomplete corpus produces the “hallucination with citations” failure mode, where the model cites a real document that is itself outdated or misleading. The quality of the retrieval corpus is not optional infrastructure; it’s the foundation of the entire mitigation stack.
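Part of that maintenance can be automated. A minimal sketch, assuming each corpus document carries a last-reviewed date and a superseded flag (both hypothetical fields), flags anything that should be re-reviewed or withdrawn from retrieval; the 365-day window is an arbitrary example policy, not a recommendation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class CorpusDoc:
    doc_id: str
    last_reviewed: date
    superseded: bool = False  # e.g. a regulation replaced by a newer version


def stale_documents(docs: List[CorpusDoc], today: date, max_age_days: int = 365) -> List[str]:
    """Return IDs of documents that should be re-reviewed or pulled from retrieval."""
    cutoff = today - timedelta(days=max_age_days)
    return [d.doc_id for d in docs if d.superseded or d.last_reviewed < cutoff]
```

Running a check like this on a schedule, and excluding flagged documents from retrieval until someone re-reviews them, keeps outdated material from being cited with full confidence.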

The pattern across legal, academic, and enterprise incidents is consistent: fabricated sources hallucination causes the most damage when organizations treat AI output as a finished product rather than a first draft requiring verification.

Courts have been explicit: AI assistance does not transfer accountability. Attorneys remain responsible for every citation they file. Enterprises remain responsible for every report, proposal, or analysis they submit. That accountability cannot be delegated to the model.

What changes with fabricated sources hallucination, compared to other AI risks, is the specific nature of the harm. A wrong fact can be corrected. A fabricated citation that enters a legal filing, a published paper, a client deliverable, or a regulatory submission carries its own evidentiary weight, and the damage to credibility, legal standing, and institutional trust doesn’t unwind easily once it’s discovered. This is exactly the dynamic we explored in “When Confident AI Becomes a Business Liability,” where the cost isn’t just financial; it’s reputational and structural.

The organizations that have avoided these incidents share a common posture: they treat AI outputs as requiring the same verification rigor as any other unvetted source. Not because they distrust the technology, but because they understand it.

At Ysquare Technology, we build enterprise AI pipelines with source-link validation, RAG grounded in approved citation databases, and continuous monitoring for hallucination risk, precisely because fabricated sources represent the highest-stakes category of AI failure for knowledge-intensive industries. Legal, healthcare, pharma, financial services, and consulting firms can’t afford the alternative.

Fabricated sources hallucination occurs when an LLM invents citations, research papers, legal cases, or URLs that appear legitimate but cannot be verified, because they are generated from pattern-matching, not retrieval.

It’s already caused measurable damage: court sanctions across the US and UK, a Nature-documented surge in invalid academic references, a refunded AU$440,000 government consulting contract, and documented patient-safety risks in medical research.

Detection requires deliberate process: every citation must be checked against verified databases, and AI outputs should never be treated as citation-verified by default.

The three proven fixes (approved citation databases, source-link validation, and RAG-grounded retrieval) work best together. Each layer closes a gap the others leave open.

Accountability doesn’t transfer to the model. Every organization, firm, and practitioner remains responsible for verifying what AI produces before it carries their name.

Ysquare Technology designs enterprise AI architecture with citation integrity built in, not bolted on. If your teams are deploying AI for research, legal, compliance, or knowledge management workflows, let’s talk about what verified retrieval looks like in practice.
